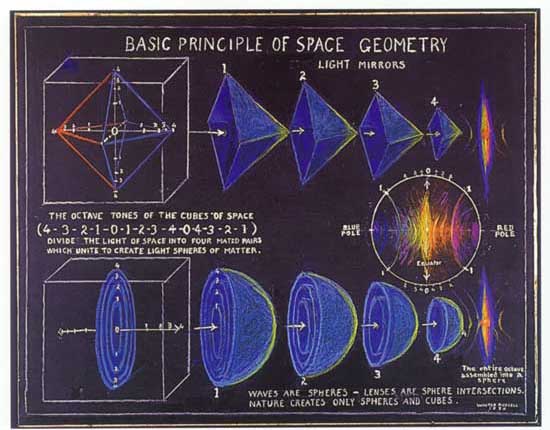

Sacred geometry, fractals and holographs represent the unification of everything. Until now we have had disjointed ideas about reality that fail to see how everything is related and equal.

My Fractal Pattern Theory, or FPT, is going to solve all of mankind's problems and make me rich and famous. I think FPT is going to be so important to our civilization that it will eventually lead to the ever-elusive great unifying theory. It is a very simple theory, but for some reason it doesn't appear to have been discovered yet. To me it looks like a ripe cherry of knowledge that was staring me in the face when I happened upon it. I feel very fortunate to have been the one to discover it; now I have to figure out a way to disseminate it.

The Fractal Pattern Theory obviously comes from fractals, so I will explain what they are first. A fractal is a beautiful natural phenomenon where a pattern

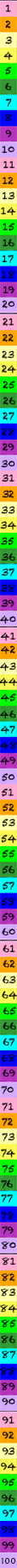

6 Nature

1 Astronomical Objects

| 1 Solar system planets diameter |

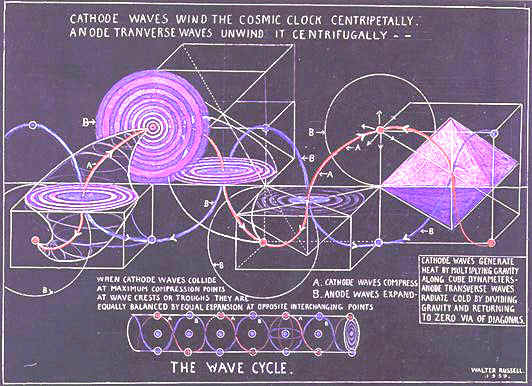

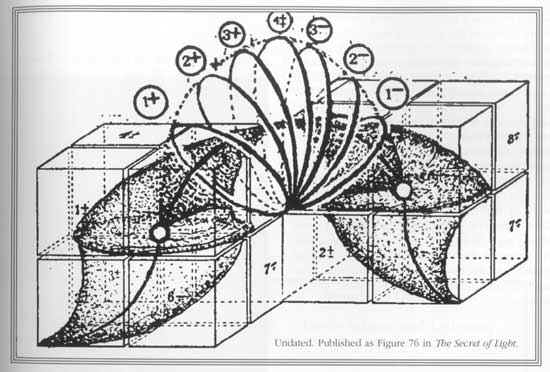

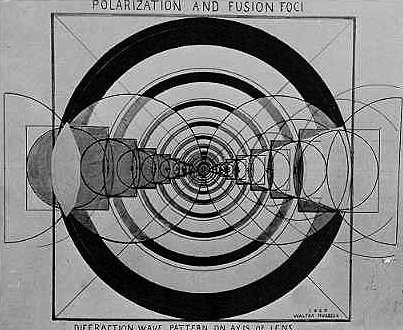

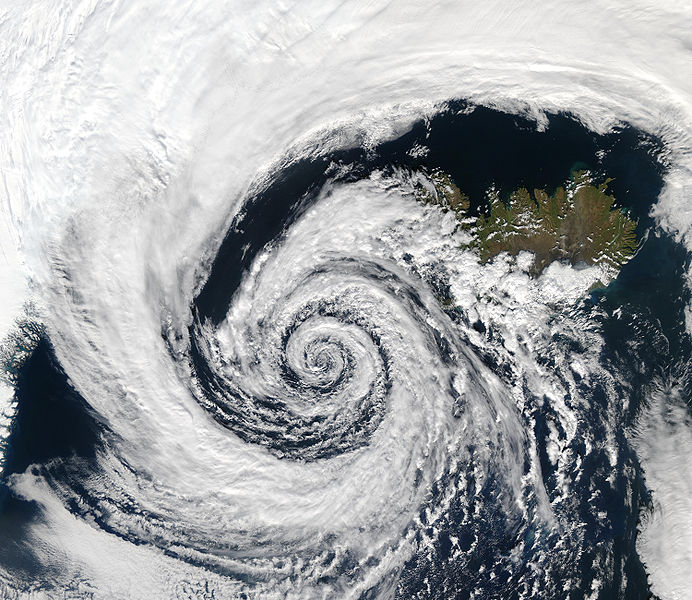

| 2 How waves Travel |

| 3 Lives of Stars |

|

| 2 Body |

| 3 Earth

|

|

6 Body

1 Introduction

|

| 2 I |

| 3 H |

| 4 C |

| 5 W |

| 6 A |

| 7 P |

|

|

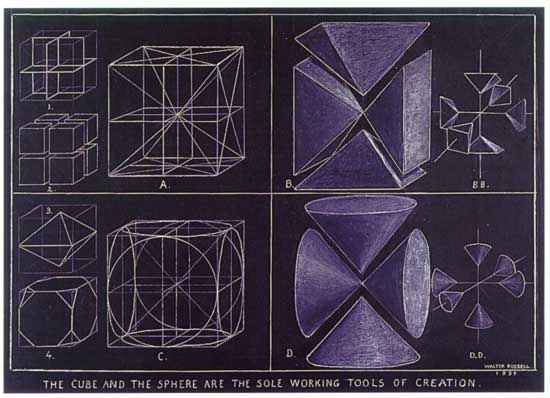

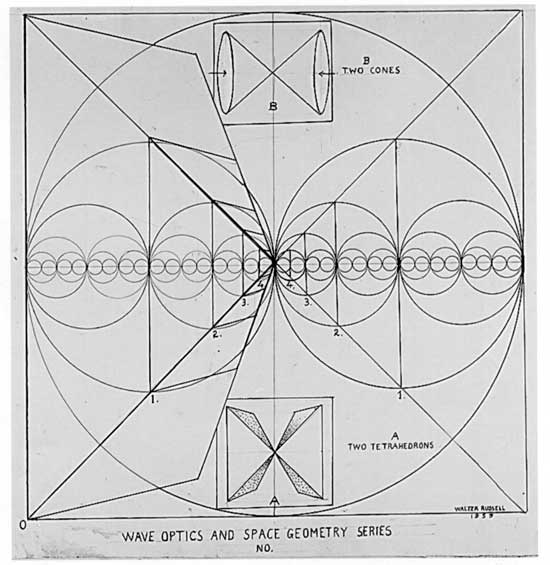

1 Sacred Geometry

| 1 Interconnecting web of life

|

| 2 Vortex |

3 Theories

| 1 Unified field theory |

| 2 Particle in a Box |

| 3 Relativity & Quantum Mechanics |

| 4 Ptolemy's theorem |

|

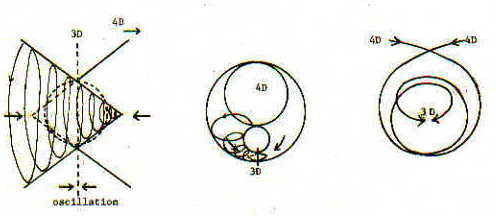

| 4 Higher Dimensions |

| 5 Gravity VS Electromagnetism |

| 6 Schwarzschild Equation |

| 7 Symmetry |

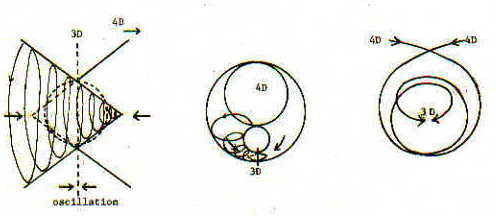

8 Dimensionality

| 1 Fractal |

| 2 Golden Ratio 1.618 |

|

9 Perfect Right Triangle

|

| 10 Gravitational singularity |

| 11 Curvature |

| 12 Naked Entropy |

| 13 Modern Progress |

| 14 Quantum Mechanics VS Classical Physics |

| 15 Mathematical Formulation |

| 16 Angles |

| 17 Radii, area, and volume |

| 18 Dual polyhedra |

| 19 Symmetry groups |

| 20 Technology |

| 21 Related Polyhedra & Polytopes |

| 22 Uniform Polyhedra |

| 23 Tessellations |

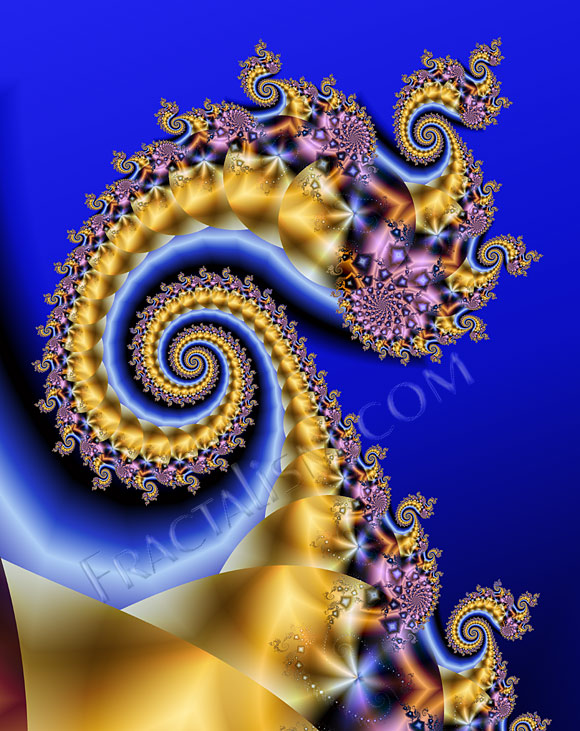

| 24 Computer generated fractals |

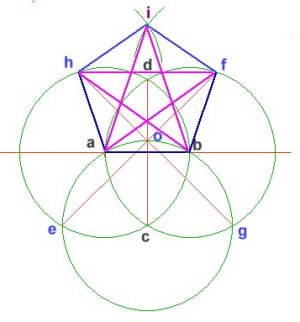

| 25 Golden triangle, pentagon and pentagram |

| 26 Scalenity of triangles |

| 27 Relationship to Fibonacci sequence |

| 28 Decimal expansion |

| 29 Mathematical pyramids and triangles technology |

| 30 Related polyhedra and polytopes |

| 31 Uniform polyhedra |

|

|

1 Architecture

|

2 Painting

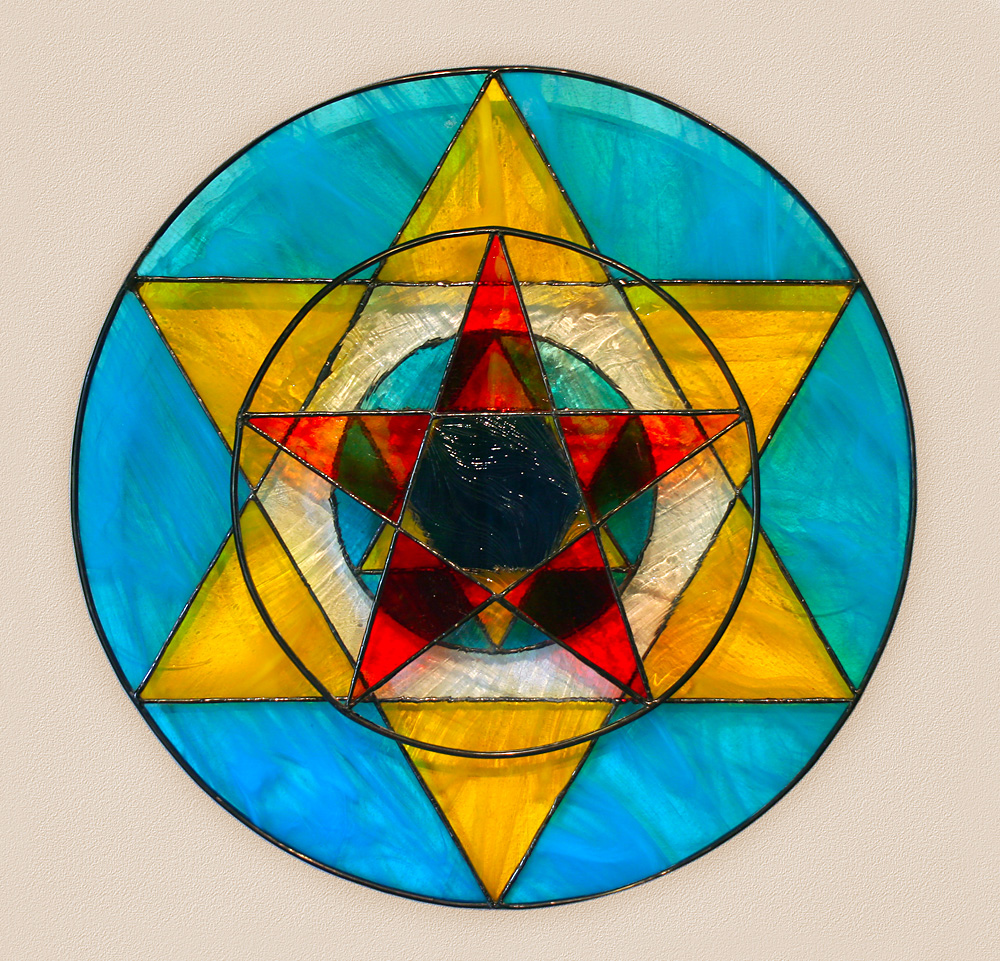

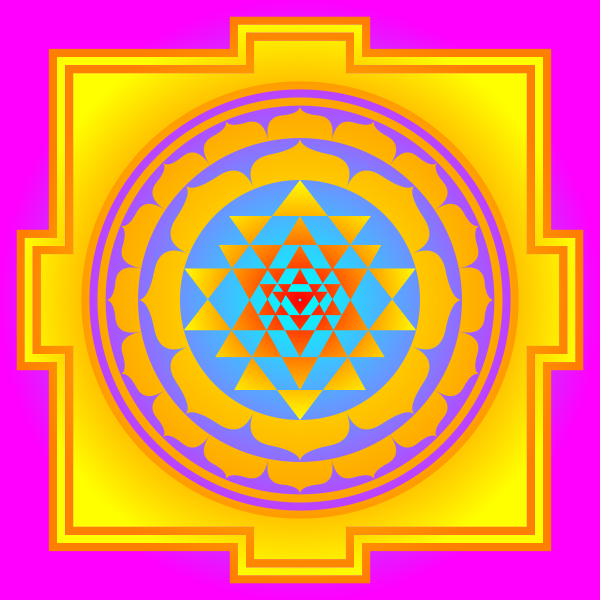

1 Mandala

|

2 Religion

|

2 Buddhism

| 1 Early & Theravada |

| 2 Vajrayana |

| 3 Shingon |

| 4 Nichiren |

| 5 Pure Land |

|

3 Christianity

|

4 Bora ring |

|

|

3 Music |

|

|

| 1 Sphere |

| 2 Circle |

| 3 Point |

| 4 Square Root of 2 |

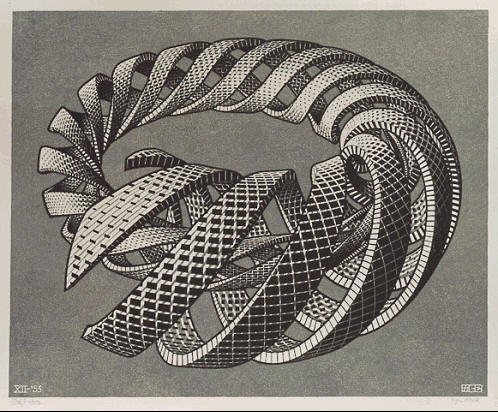

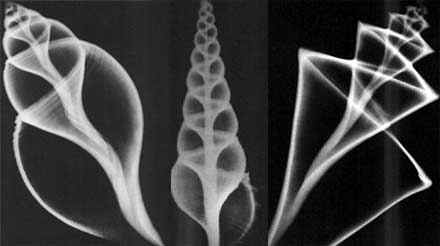

| 5 Spiral |

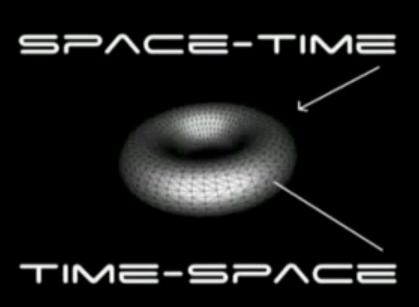

| 6 Torus |

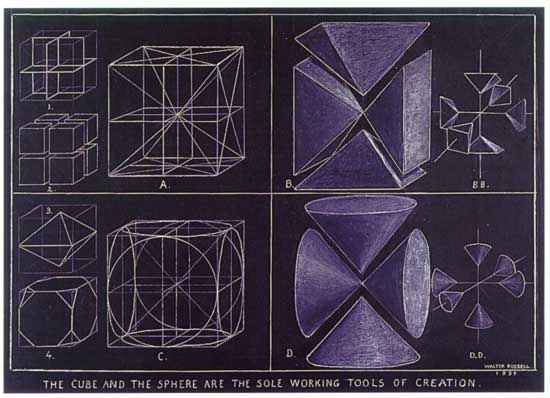

| 7 Cone |

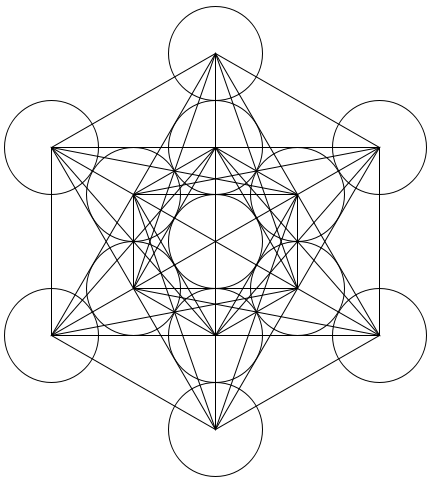

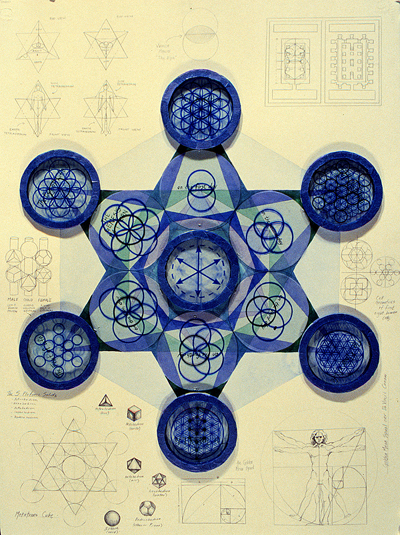

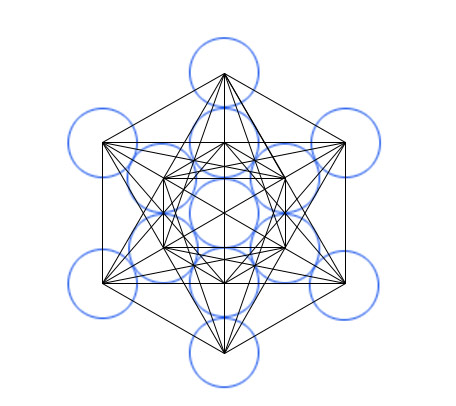

| 8 Metatron's Cube |

| 9 Pyramids |

|

5 About

1 Introduction

|

| 2 Interpretation |

| 3 History |

| 4 Current Status |

| 5 Worldview |

| 6 Applications |

| 7 Philosophical Consequences |

|

|

4 Types of Shapes

|

2 Archimedean Solid

| 1 truncated tetrahedron |

| 2 cuboctahedron |

| 3 truncated cube |

| 4 truncated octahedron |

| 5 rhombicuboctahedron |

| 6 truncated cuboctahedron |

| 7 snub cube |

| 8 icosidodecahedron |

| 9 truncated dodecahedron |

| 10 truncated icosahedron |

| 11 rhombicosidodecahedron |

| 12 truncated icosidodecahedron |

| 13 snub dodecahedron |

|

|

|

1 Sacred Geometry

God is like a tree

Torus

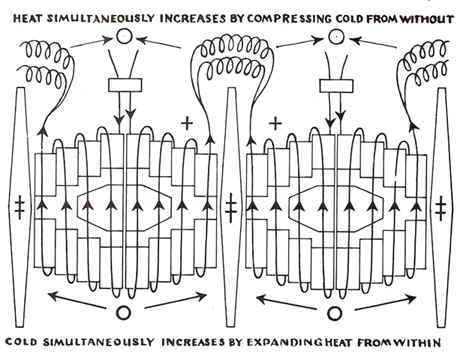

Waves talking to each other

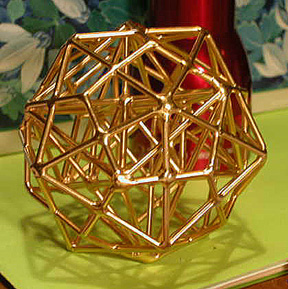

Polyhedron

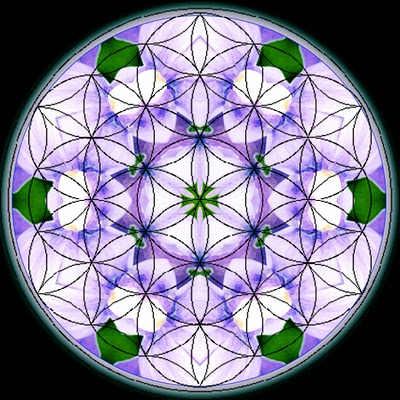

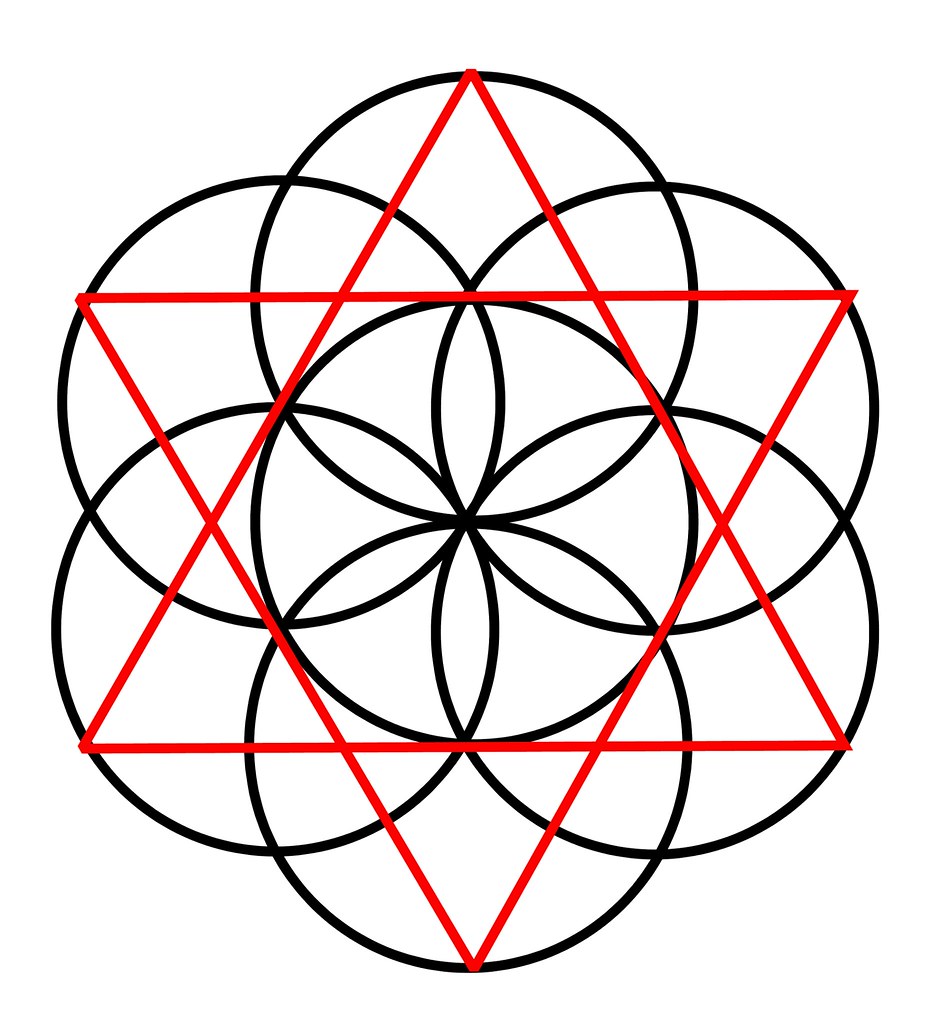

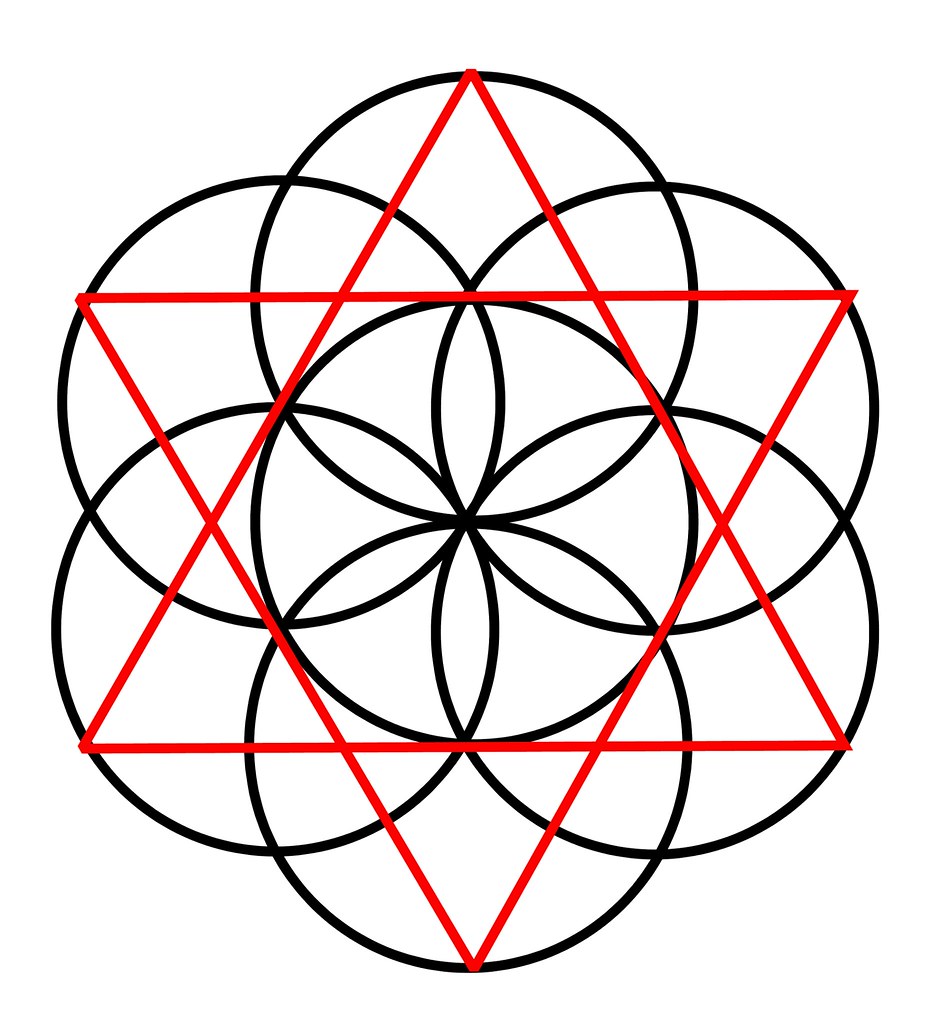

The flower of life

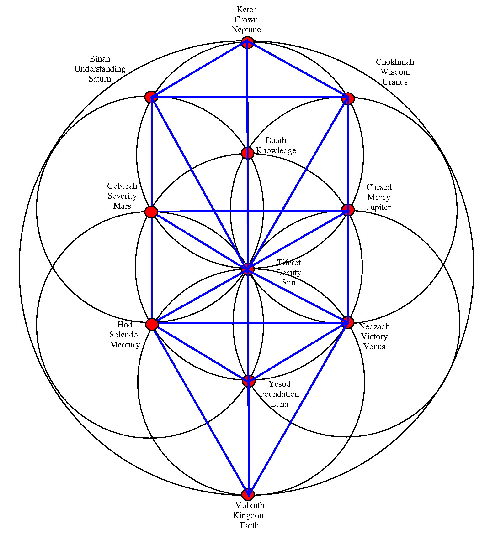

Flower of life and tree of life

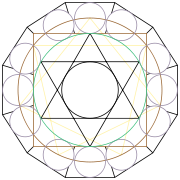

Hexagon

OM

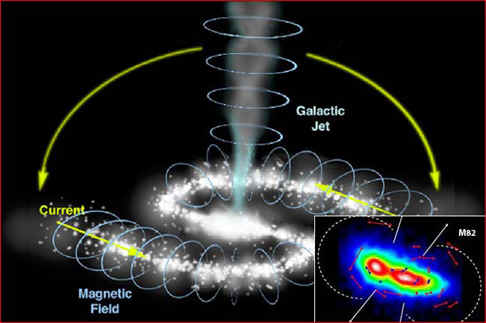

Galactic jet

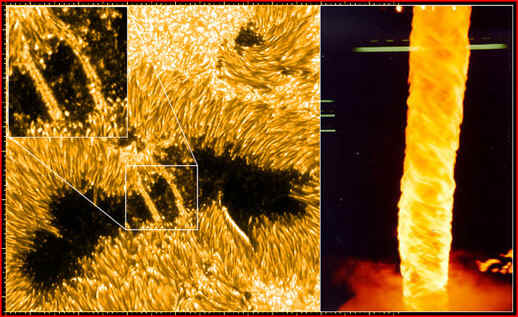

Twisting corona

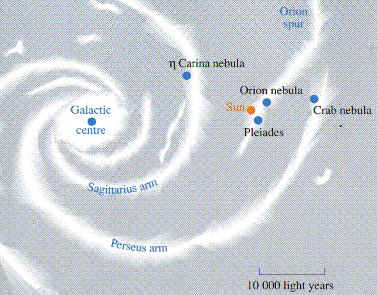

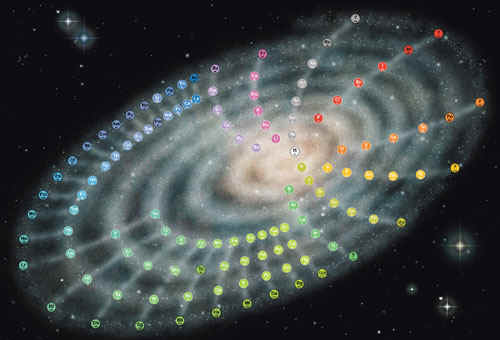

Our spiraling galaxy

Space time

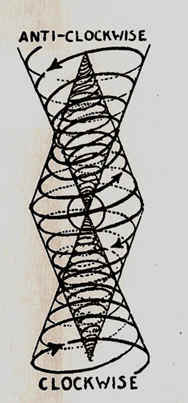

Wave travel

Cause of the Magnetosphere

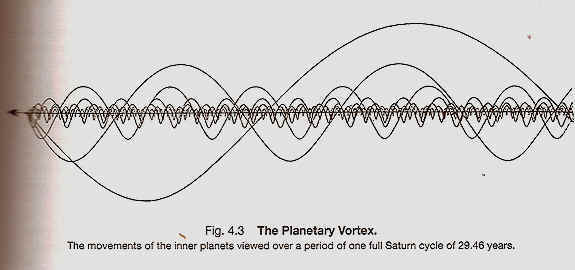

The solar system traveling though space

How energy spins in and out of the sphere

Spiral in the galaxy

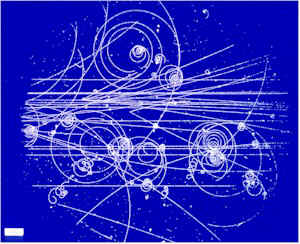

Photos from the Bubble chamber

Torus

Torus dynamics

Earth grid

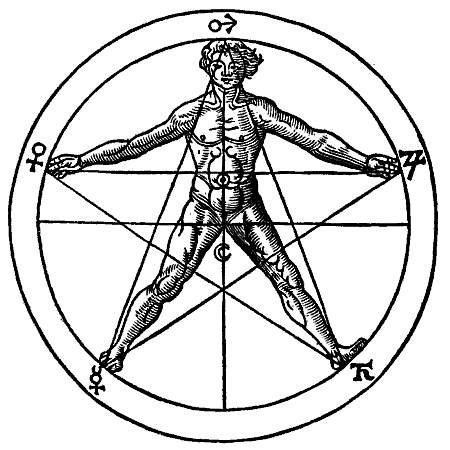

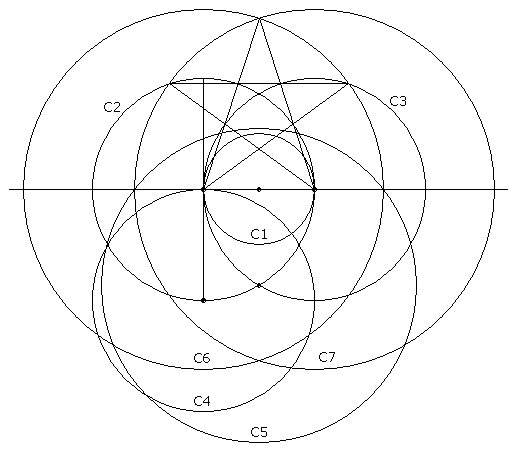

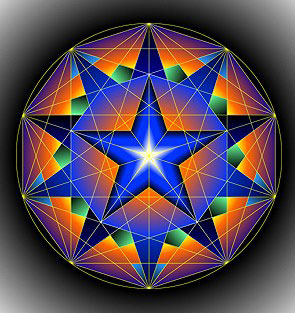

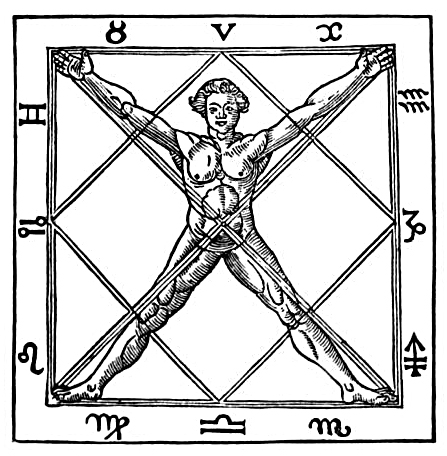

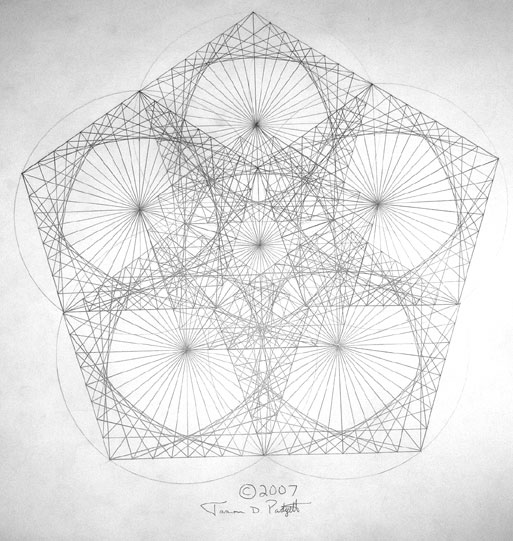

Pentagram drawing

Hexagram and flower of life

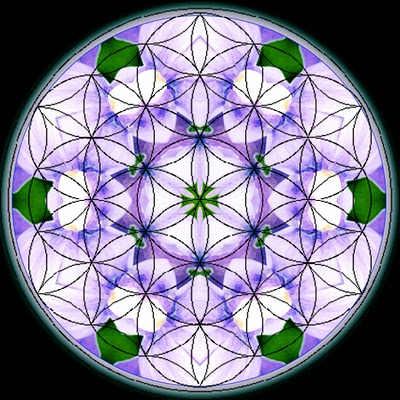

Intricate flower of life

Hexagon inside dodecagon

Heptagram and heptagon

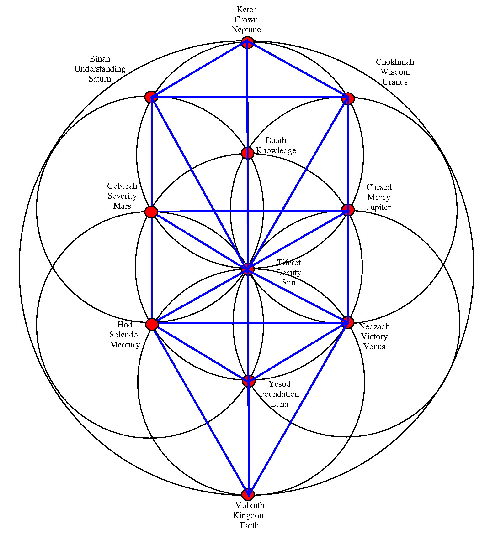

Tree of life point meanings

Heptagram and heptagon

Pentagram with the body

Star fractal

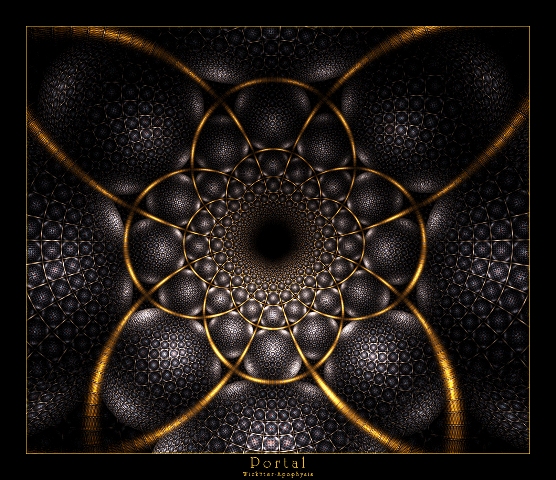

Digital art fractal

Computer generated Golden Mean fractal

Computer generated rainbow fractal

Fractal portal art

Computer generated star fractal

Golden mean spiral

Vortex spirals created by fish

Black hole

Relationship between time and space

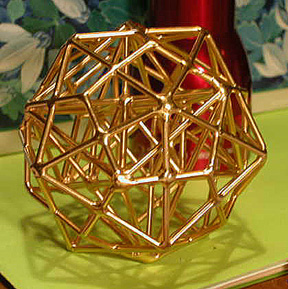

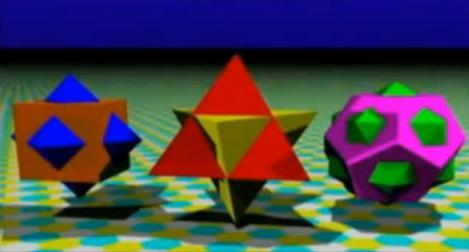

Platonic solids

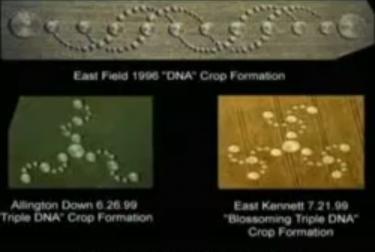

DNA

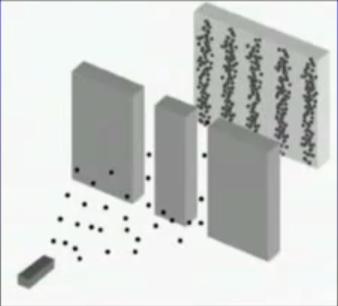

Double slit experiment with particles

Pentagon on the Earth

VE sphere

3D flower of life

Petals

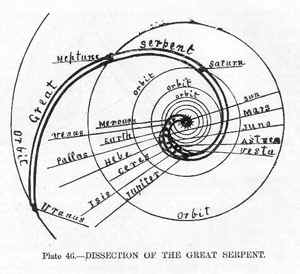

Solar System Planets Diameter

- The solar system is 12 light hours across. Since the speed of light is about 300,000 km/second (186,000 miles/second), 12 light hours is 12,960,000,000 kilometers (1.296 × 10^10 km, or 12 billion 960 million kilometers), which is 8,052,970,651.4 miles.

- Diameter of the Earth = 12,756.2 kilometers

- Diameter of Mercury = 4879 km at its equator

- The diameter of Mars is 6792 km at the equator and 6752 km at the poles. Mean diameter = 6772 km.

- Venus diameter: 12103.6 km (about 7521 miles) ...

- Diameter of Jupiter: 142800 km.

- Saturn's diameter is around 75000 miles (120000 kilometers).

- Diameter of Uranus is 51118 km

- Pluto's diameter is 2274 kilometers (1413 miles)

- 12.1 nm, which is enough for the membrane thickness plus an M13 virus diameter

- Bacteria measure from 0.2 – 0.3 microns in diameter

- Many of the spherical bacteria are about 1 micrometer in diameter

- Diameter of red blood cell 0.0076 mm

- The average cell in our body is about 50 micrometers (0.05 mm) in diameter

- The diameter of human hair ranges from 17 to 181 µm (micrometers)

- The dermis also varies in thickness depending on the location of the skin. It is 0.3 mm on the eyelid and 3.0 mm on the back.

- Whereas the thinnest walls, about 50 nm, are found in inner cell layers.
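The light-hour conversion in the first item of the list above can be checked with a few lines of Python. This is only a sketch: it assumes the same rounded speed of light (300,000 km/s) that the list uses, and the function name `light_hours_to_km` and the kilometers-per-mile constant are illustrative additions, not part of the original notes.

```python
# Rounded speed of light used in the list above (the exact value is 299,792.458 km/s).
SPEED_OF_LIGHT_KM_S = 300_000
KM_PER_MILE = 1.609344  # assumption: international mile


def light_hours_to_km(hours: float) -> float:
    """Distance light travels in the given number of hours, in kilometers."""
    return hours * 3600 * SPEED_OF_LIGHT_KM_S


km = light_hours_to_km(12)
miles = km / KM_PER_MILE
print(f"{km:,.0f} km")        # 12,960,000,000 km
print(f"{miles:,.1f} miles")
```

With the rounded speed of light, the kilometer figure matches the one quoted in the list; the mile figure follows from dividing by 1.609344.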

Large and Small

- 100 B galaxies visible

- 30% disc shaped galaxies like the milky way

- 60% ellipticals, smaller and larger than milky way, some with thousands of billions of stars

- 10% irregular galaxies

- Universe growing at 600 km per second, no center, 13 B years old

- There are black holes millions of times more massive than the sun that shoot out electromagnetic radiation from quasars

- Farther light travels the longer the wavelength

- 1/10,000 of a second after big bang subatomic stuff formed

- After 500,000 years electromagnetic radiation and matter went their separate ways, 1,000 B degrees, photons were particle versus antiparticle pairs, this happened as universe cooled; for every billion antiprotons there were a B and 1 protons, these leftovers formed known universe. The rest canceled each other out and are background photon radiation

- 4 minutes after time zero hydrogen and helium nuclei formed (alpha particles)

- 300,000 years after time zero the atoms formed, allowing electromagnetic radiation to move freely through matter.

- Ultimately total energy of Universe is zero because it all cancels each other out, the negative energy is gravity causes kinetic energy of motion, these together first forever

- At 10^-43 seconds old universe was 10^94 grams per cm^3 and 10^-33 cm across

- Universe doubled in size in 5 B years

- Force of gravity between 2 objects infinity apart is 1 by infinity squared

- 99% universe is dark matter, unaffected by all forces but gravity, photons, wimps, spread out evenly in universe unlike visible matter, go through us at 100 k per second

- 45 BB molecules in a cm^3 of air at 0 degrees Celsius

- PROTON vs. H-ATOM SIZE: The diameter of a single proton has been measured and is found to be 10^-15 meters. The diameter of a single hydrogen atom in its ground state is 10^-10 meters. So the RATIO of the size of the hydrogen atom to the size of the proton is 10^5.

- DNA molecules have a consistent width of about 2.5 nanometers

- The diameter of a C60 "buckyball" is about 10 Angstrom (1 nanometer).

- The diameter of an atom ranges from about 0.1 to 0.5 nanometer

- A proton has a diameter of approximately one-millionth of a nanometer

- Diameter of red blood cell is 0.0076mm

- The diameter of the nucleus is in the range of 1.6 fm (1.6 × 10^-15 m) (for a proton in light hydrogen) to about 15 fm (for the heaviest atoms).

- Central part of an atom or nucleus, has a diameter of about 10^-15 m or 1.6 fm in ...

- Galaxy with a diameter of about 100000 light-years containing about 200 billion stars.

- In the model km m cm diameter of Sun 1400000 23 (ball) distance from Sun to ...

- Earth's Diameter at the Equator: 7926.28 miles (12756.1 km)

- The Sun's diameter is 1.4 million kilometers

- A light-year is about 9461000000000 km.

- Diameter of the moon = 3,474.8 kilometers

Big Stars Diameter in Kilometers

- 160K how high satellites are

- Meteors burn up 100K up at 100,000 Kph

- Moon 81 X smaller than earth, 15.5 CM AWAY

- Mercury - 58 M km from the sun, 5000 km diameter

- Solar system 4.6 B years old

- Mars - Half Earth's size

- Saturn - 10X earth's diameter

- Neptune - 30X as far from sun as earth

- Sun big beach ball 30 inches (70 cm) across and earth is 75 meters away the size of a pea, mercury is 30 m away the size of a pinhead, jupiter is 400 meters away the size of a tennis ball, uranus a 10 Aussie cent coin 1.5 km away, pluto a speck 18 km away.

- Sirius 2,335,000

- Vega 4,315,000

- Pollux 11,120,000

- Arcturus 22,101,000

- Super giants 100 - 200 X the sun's diameter

- Aldebaran 59,770,000

- Rigel 97,300,000

- Deneb 201,550,000

- Pistol star 450,520,000

- Betelgeuse 903,500,000

- Antares 1,330,000,000

- W Cephei 2,644,800,000

- Globular clusters: tens of thousands to over a million stars, can be thousands per cubic light year

- Milky way galaxy 946,100,000,000,000,000

- 10 Billion stars in milky way, olympic swimming pool with drops of water

- Galactic center 30K light years away

- If the galaxy was as big as Australia stars would be almost invisible specks 200 m apart and the solar system is 20 mm across

- Nebula, hundreds of light years in size

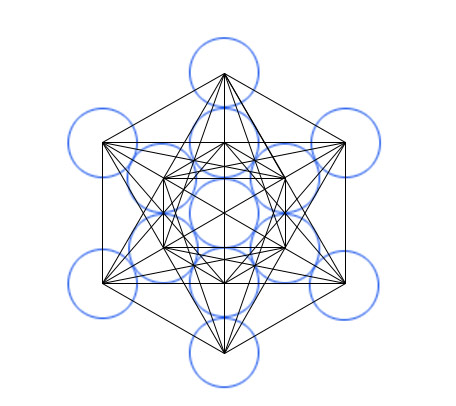

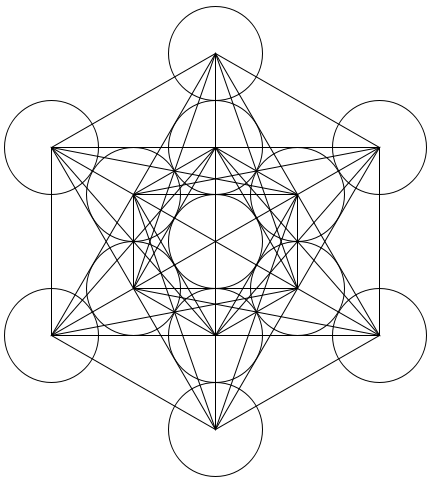

Metatron's Cube

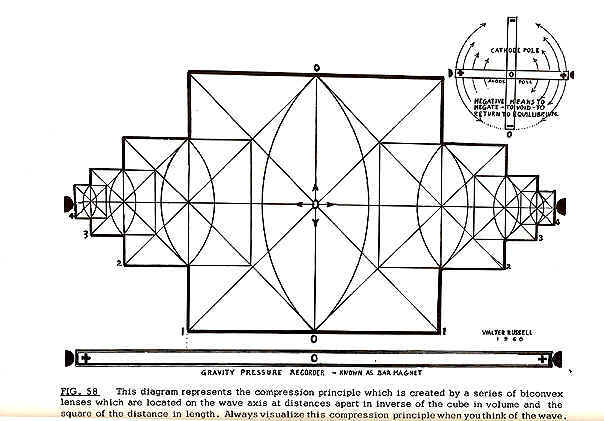

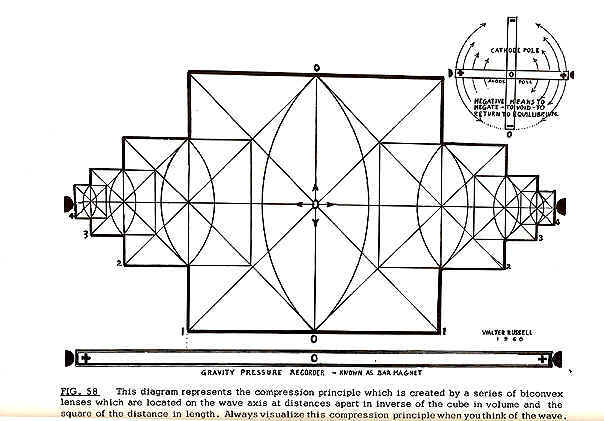

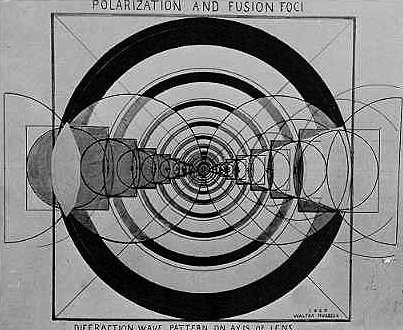

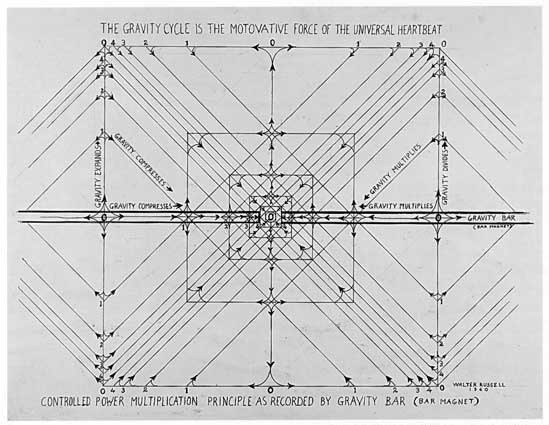

Gravity vs electromagnetism

Karl Schwarzschild - the Schwarzschild equation. Schwarzschild singularity

Sacred geometry is geometry used in the design of sacred architecture and sacred art. The basic belief is that geometry and mathematical ratios, harmonics and proportion are also found in music, light, and cosmology. This value system has been found even in human prehistory and is considered by some to be a cultural universal of the human condition. Sacred geometry is foundational to the building of sacred structures such as temples, mosques, megaliths, monuments and churches; sacred spaces such as altars, temenoi and tabernacles; meeting places such as sacred groves, village greens and holy wells and the creation of religious art, iconography and using "divine" proportions. Sacred geometry-based arts may also be ephemeral, such as found in sandpainting and medicine wheels. |

As worldview |

|

Music  |

The discovery of the relationship of geometry and mathematics to music within the Classical Period is attributed to Pythagoras, who found that a string stopped halfway along its length produced an octave, while a ratio of 3/2 produced a fifth and 4/3 produced a fourth. Pythagoreans believed that this gave music powers of healing, as it could "harmonize" the out-of-balance body, and this belief has been revived in modern times. Hans Jenny, a physician who pioneered the study of geometric figures formed by wave interactions and named that study cymatics, is often cited in this context. However, Dr. Jenny did not make healing claims for his work.
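The three string ratios attributed to Pythagoras can be tabulated in a short Python sketch. The `cents` helper (1200 cents to the octave) is a modern measuring convention added here for illustration and is not part of the Pythagorean account; the dictionary and function names are likewise hypothetical.

```python
import math
from fractions import Fraction

# Frequency ratios attributed to Pythagoras in the paragraph above.
INTERVALS = {
    "octave": Fraction(2, 1),
    "fifth": Fraction(3, 2),
    "fourth": Fraction(4, 3),
}


def cents(ratio: Fraction) -> float:
    """Size of an interval in cents (1200 cents = one octave)."""
    return 1200 * math.log2(float(ratio))


for name, ratio in INTERVALS.items():
    # A string shortened to 1/ratio of its length sounds `ratio` times higher.
    print(f"{name}: ratio {ratio}, string fraction {1 / ratio}, {cents(ratio):.1f} cents")

# A fifth stacked on a fourth spans exactly one octave: 3/2 * 4/3 = 2.
print(INTERVALS["fifth"] * INTERVALS["fourth"] == INTERVALS["octave"])
```

Using exact fractions rather than floats keeps the "fifth plus fourth equals octave" check free of rounding error.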

At least as late as Johannes Kepler (1571-1630), a belief in the geometric underpinnings of the cosmos persisted among scientists. Kepler explored the ratios of the planetary orbits, at first in two dimensions (having spotted that the ratio of the orbits of Jupiter and Saturn approximates the in-circle and out-circle of an equilateral triangle). When this did not give him a neat enough outcome, he tried using the Platonic solids. In fact, planetary orbits can be related using two-dimensional geometric figures, but the figures do not occur in a particularly neat order. Even in his own lifetime (with less accurate data than we now possess) Kepler could see that the fit of the Platonic solids was imperfect; however, other geometric configurations are possible.

Natural forms  |

Many forms observed in nature can be related to geometry (for sound reasons of resource optimization). For example, the chambered nautilus grows at a constant rate and so its shell forms a logarithmic spiral to accommodate that growth without changing shape. Also, honeybees construct hexagonal cells to hold their honey. These and other correspondences are seen by believers in sacred geometry to be further proof of the cosmic significance of geometric forms. But some scientists see such phenomena as the logical outcome of natural principles. |

Art and architecture  |

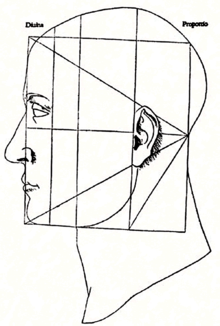

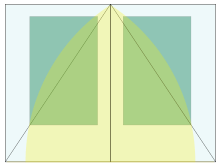

The golden ratio, geometric ratios, and geometric figures were often employed in the design of Egyptian, ancient Indian, Greek and Roman architecture. Medieval European cathedrals also incorporated symbolic geometry. Indian and Himalayan spiritual communities often constructed temples and fortifications on design plans of mandala and yantra.

Contemporary usage

A contemporary usage of the term sacred geometry describes assertions of a mathematical order to the intrinsic nature of the universe. Scientists see the same geometric and mathematical patterns as arising directly from natural principles.

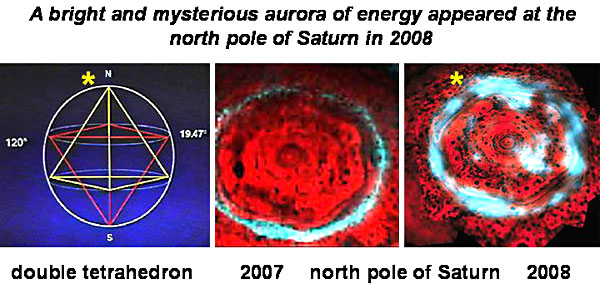

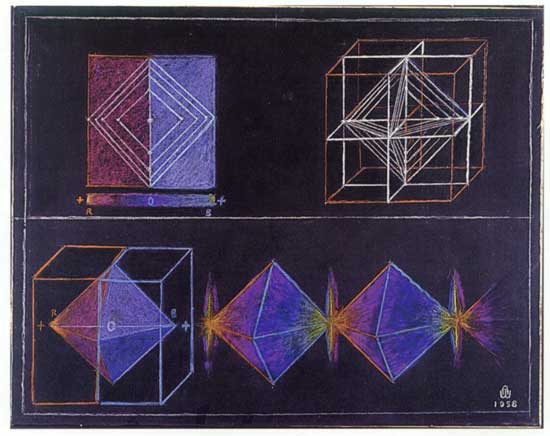

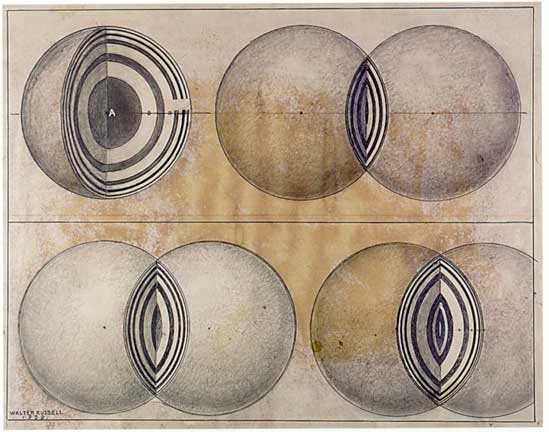

Among the most prevalent traditional geometric forms ascribed to sacred geometry are the sine wave, the sphere, the vesica piscis, the torus (donut), the 5 platonic solids, the golden spiral, the tesseract (4-dimensional cube), Fractals and the star tetrahedron (2 oppositely oriented and interpenetrating tetrahedrons) which leads to the merkaba.

The strands of our DNA, the cornea of our eye, snow flakes, pine cones, flower petals, diamond crystals, the branching of trees, a nautilus shell, the star we spin around, the galaxy we spiral within, the air we breathe, and all life forms as we know them emerge out of timeless geometric codes.

The designs of exalted holy places from the prehistoric monuments at Stonehenge and the Pyramid of Khufu at Giza, to the world's great cathedrals, mosques, and temples are based on these same principles of sacred geometry.

As far back as Greek Mystery schools 2500 years ago it was taught that there are five perfect 3-dimensional forms - the tetrahedron, hexahedron, octahedron, dodecahedron, and icosahedron...collectively known as the Platonic Solids; and that these form the foundation of everything in the physical world.

Modern scholars ridiculed this idea until the 1980s, when Professor Robert Moon at the University of Chicago proposed a model in which the entire Periodic Table of Elements - literally everything in the physical world - is based on these same five geometric forms. In fact, throughout modern physics, chemistry, and biology, the sacred geometric patterns of creation are today being rediscovered.

The ancients knew that these patterns were codes symbolic of our own inner realm and that the experience of sacred geometry was essential to the education of the soul. Viewing and contemplating these forms can allow us to gaze directly at the face of deep wisdom and glimpse the inner workings of The Universal Mind.

LightSOURCE Arts is dedicated to bringing the power of sacred geometry and the wonderfully patterned beauty of Creation to your life.

Sacred Geometry Home Page by Bruce Rawles

In nature, we find patterns, designs and structures from the most minuscule particles, to expressions of life discernible by human eyes, to the greater cosmos. These inevitably follow geometrical archetypes, which reveal to us the nature of each form and its vibrational resonances. They are also symbolic of the underlying metaphysical principle of the inseparable relationship of the part to the whole. It is this principle of oneness underlying all geometry that permeates the architecture of all form in its myriad diversity. This principle of interconnectedness, inseparability and union provides us with a continuous reminder of our relationship to the whole, a blueprint for the mind to the sacred foundation of all things created. |

The Sphere  |

Starting with what may be the simplest and most perfect of forms, the sphere is an ultimate expression of unity, completeness, and integrity. There is no point of view given greater or lesser importance, and all points on the surface are equally accessible and regarded by the center from which all originate. Atoms, cells, seeds, planets, and globular star systems all echo the spherical paradigm of total inclusion, acceptance, simultaneous potential and fruition, the macrocosm and microcosm. |

The Circle  |

The circle is a two-dimensional shadow of the sphere which is regarded throughout cultural history as an icon of the ineffable oneness; the indivisible fulfillment of the Universe. All other symbols and geometries reflect various aspects of the profound and consummate perfection of the circle, sphere and other higher dimensional forms of these we might imagine.

The ratio of the circumference of a circle to its diameter, Pi, is the original transcendental and irrational number. (Pi equals about 3.14159265358979323846264338327950288419716939937511...) It cannot be expressed in terms of the ratio of two whole numbers, or in the language of sacred symbolism, the essence of the circle exists in a dimension that transcends the linear rationality that it contains. Our holistic perspectives, feelings and intuitions encompass the finite elements of the ideas that are within them, yet have a greater wisdom than can be expressed by those ideas alone. |

The Point  |

At the center of a circle or a sphere is always an infinitesimal point. The point needs no dimension, yet embraces all dimension. Transcendence of the illusions of time and space results in the point of here and now, our most primal light of consciousness. The proverbial "light at the end of the tunnel" is being validated by the ever-increasing literature on so-called "near-death experiences". If our essence is truly spiritual omnipresence, then perhaps the "point" of our being "here" is to recognize the oneness we share, validating all "individuals" as equally precious and sacred aspects of that one.

Life itself as we know it is inextricably interwoven with geometric forms, from the angles of atomic bonds in the molecules of the amino acids, to the helical spirals of DNA, to the spherical prototype of the cell, to the first few cells of an organism which assume vesical, tetrahedral, and star (double) tetrahedral forms prior to the diversification of tissues for different physiological functions. Our human bodies on this planet all developed with a common geometric progression from one to two to four to eight primal cells and beyond.

Almost everywhere we look, the mineral intelligence embodied within crystalline structures follows a geometry unfaltering in its exactitude. The lattice patterns of crystals all express the principles of mathematical perfection and repetition of a fundamental essence, each with a characteristic spectrum of resonances defined by the angles, lengths and relational orientations of its atomic components. |

The Square Root of Two  |

The square root of 2 embodies a profound principle of the whole being more than the sum of its parts. (The square root of two equals about 1.414213562...) The orthogonal dimensions (axes at right angles) form the conjugal union of the horizontal and vertical which give birth to the greater offspring of the hypotenuse. The new generation possesses the capacity for synthesis, growth, integration and reconciliation of polarities by spanning both perspectives equally. The root of two originating from the square leads to a greater unity, a higher expression of its essential truth, faithful to its lineage.

The fact that the root is irrational expresses the concept that our higher dimensional faculties can't always be expressed in lower order dimensional terms - e.g. "And the light shineth in darkness; and the darkness comprehended it not." (from the Gospel of St. John, Chapter 1, verse 5). By the same token, we have the capacity to surpass the genetically programmed limitations of our ancestors, if we can shift into a new frame of reference (i.e. neutral with respect to prior axes, yet formed from that matrix-seed conjugation). Our dictionary refers to the word matrix both as a womb and an array (or grid lattice). Our language has some wonderful built-in metaphors if we look for them!

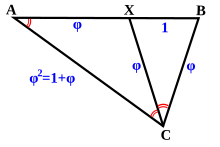

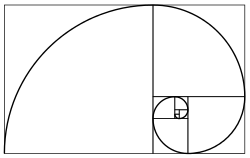

The Golden Ratio  |

The golden ratio (a.k.a. phi ratio a.k.a. sacred cut a.k.a. golden mean a.k.a. divine proportion) is another fundamental measure that seems to crop up almost everywhere, including crops. (The golden ratio is about 1.618033988749894848204586834365638117720309180...) The golden ratio is the unique ratio such that the ratio of the whole to the larger portion is the same as the ratio of the larger portion to the smaller portion. As such, it symbolically links each new generation to its ancestors, preserving the continuity of relationship as the means for retracing its lineage.

The golden ratio (phi) has some unique properties and makes some interesting appearances: |

- phi = phi^2 - 1; therefore 1 + phi = phi^2; phi + phi^2 = phi^3; phi^2 + phi^3 = phi^4; ad infinitum.

- phi = (1 + square root(5)) / 2, from the quadratic formula applied to 1 + phi = phi^2.

- phi = 1 + 1/(1 + 1/(1 + 1/(1 + 1/(1 + 1/(1 + 1/...)))))

- phi = square root(1 + square root(1 + square root(1 + square root(1 + square root(1 + ...)))))

- phi = (sec 72°)/2 = (csc 18°)/2 = 1/(2 cos 72°) = 1/(2 sin 18°) = 2 sin 54° = 2 cos 36° = 2/(csc 54°) = 2/(sec 36°) for all you trigonometry enthusiasts.

- phi = the ratio of segments in a 5-pointed star (pentagram) considered sacred to Plato and Pythagoras in their mystery schools. Note that each larger (or smaller) section is related by the phi ratio, so that a power series of the golden ratio raised to successively higher (or lower) powers is automatically generated: phi, phi^2, phi^3, phi^4, phi^5, etc.

- phi = apothem to bisected base ratio in the Great Pyramid of Giza.

- phi = ratio of adjacent terms of the famous Fibonacci Series evaluated at infinity; the Fibonacci Series is a rather ubiquitous set of numbers that begins with one and one and each term thereafter is the sum of the prior two terms, thus: 1,1,2,3,5,8,13,21,34,55,89,144... (interesting that the 12th term is 12 "raised to a higher power", which appears prominently in a vast collection of metaphysical literature)
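The algebraic, continued-fraction, nested-radical and trigonometric forms of phi listed above can be checked numerically. The following is a minimal sketch, not a proof: the function names and the truncation depth of 40 are illustrative choices, and the nested radical is evaluated in the form sqrt(1 + sqrt(1 + ...)), which is the version that converges to phi.

```python
import math

PHI = (1 + math.sqrt(5)) / 2  # closed form from the quadratic x^2 = x + 1

# Algebraic identities: phi^n + phi^(n+1) = phi^(n+2)
for n in range(0, 5):
    assert math.isclose(PHI**n + PHI ** (n + 1), PHI ** (n + 2))


def continued_fraction(depth: int) -> float:
    """Evaluate 1 + 1/(1 + 1/(1 + ...)) truncated after `depth` levels."""
    x = 1.0
    for _ in range(depth):
        x = 1 + 1 / x
    return x


def nested_radical(depth: int) -> float:
    """Evaluate sqrt(1 + sqrt(1 + ...)) truncated after `depth` levels."""
    x = 1.0
    for _ in range(depth):
        x = math.sqrt(1 + x)
    return x


# Trigonometric forms (angles given in degrees, converted to radians)
trig_forms = [
    2 * math.cos(math.radians(36)),
    2 * math.sin(math.radians(54)),
    1 / (2 * math.sin(math.radians(18))),
    1 / (2 * math.cos(math.radians(72))),
]

print(PHI, continued_fraction(40), nested_radical(40))
print(all(math.isclose(value, PHI) for value in trig_forms))
```

Both iterations are fixed-point schemes for x = 1 + 1/x and x = sqrt(1 + x); each has phi as its positive fixed point, which is why a few dozen steps are enough.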

The mathematician credited with the discovery of this series is Leonardo Pisano Fibonacci, and there is a publication devoted to disseminating information about its unique mathematical properties, The Fibonacci Quarterly.

Fibonacci ratios appear in the ratio of the number of spiral arms in daisies, in the chronology of rabbit populations, in the sequence of leaf patterns as they twist around a branch, and a myriad of places in nature where self-generating patterns are in effect. The sequence is the rational progression towards the irrational number embodied in the quintessential golden ratio.
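The "rational progression towards the irrational number" can be watched directly by dividing each Fibonacci term by the one before it. This is a small illustrative sketch; the function name `fibonacci` and the choice of twelve terms are assumptions made for the example.

```python
import math

PHI = (1 + math.sqrt(5)) / 2


def fibonacci(count: int) -> list[int]:
    """Return the first `count` Fibonacci numbers, starting 1, 1."""
    terms = [1, 1]
    while len(terms) < count:
        terms.append(terms[-1] + terms[-2])
    return terms[:count]


terms = fibonacci(12)
print(terms)  # the 12th term is 144 = 12**2

# Ratios of adjacent terms close in on phi from alternating sides.
for a, b in zip(terms, terms[1:]):
    print(f"{b}/{a} = {b / a:.6f}  (error {abs(b / a - PHI):.2e})")
```

By the twelfth term the ratio 144/89 already agrees with phi to four decimal places.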

This most aesthetically pleasing proportion, phi, has been utilized by numerous artists since (and probably before!) the construction of the Great Pyramid. As scholars and artists of eras gone by discovered (such as Leonardo da Vinci, Plato, and Pythagoras), the intentional use of these natural proportions in art of various forms expands our sense of beauty, balance and harmony to optimal effect. Leonardo da Vinci used the Golden Ratio in his painting of The Last Supper in both the overall composition (three vertical Golden Rectangles) and a decagon (which contains the golden ratio) for alignment of the central figure of Jesus.

The outline of the Parthenon on the Acropolis in Athens, Greece is enclosed by a Golden Rectangle by design.

The Square Root of 3 and the Vesica Piscis

The Vesica Piscis is formed by the intersection of two circles or spheres of equal radius, each of which passes exactly through the center of the other. This symbolic intersection represents the "common ground", "shared vision" or "mutual understanding" between equal individuals. The shape of the human eye itself is a Vesica Piscis. The spiritual significance of "seeing eye to eye" to the "mirror of the soul" was highly regarded by numerous Renaissance artists who used this form extensively in art and architecture. The ratio of the axes of the form is the square root of 3, which alludes to the deepest nature of the triune which cannot be adequately expressed by rational language alone.
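The square-root-of-3 ratio of the axes can be worked out from coordinates. The sketch below assumes the usual construction, two circles of equal radius with each center lying on the other circle; the function name `vesica_axes` and the placement of the centers at (0, 0) and (radius, 0) are illustrative choices.

```python
import math


def vesica_axes(radius: float) -> tuple[float, float]:
    """Return (long axis, short axis) of the lens formed by two circles of
    equal radius whose centers are one radius apart."""
    # Circles centered at (0, 0) and (radius, 0) intersect where x = radius / 2.
    half_height = math.sqrt(radius**2 - (radius / 2) ** 2)
    long_axis = 2 * half_height  # distance between the two intersection points
    short_axis = radius          # the lens spans x = 0 to x = radius
    return long_axis, short_axis


long_axis, short_axis = vesica_axes(1.0)
print(long_axis / short_axis, math.sqrt(3))
```

The ratio does not depend on the radius, since both axes scale with it.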

Spirals |

This spiral generated by a recursive nest of Golden Triangles (triangles with relative side lengths of 1, phi and phi) is the classic shape of the Chambered Nautilus shell. The creature building this shell uses the same proportions for each expanded chamber that is added; growth follows a law which is everywhere the same. The outer triangle is the same as one of the five "arms" of the pentagonal graphic above. |

Toroids |

Rotating a circle about a line tangent to it creates a torus, which is similar to a donut shape where the center exactly touches all the "rotated circles." The surface of the torus can be covered with 7 distinct areas, all of which touch each other; an example of the classic "map problem" where one tries to find a map where the least number of unique colors are needed. In this 3-dimensional case, 7 colors are needed, meaning that the torus has a high degree of "communication" across its surface. The image shown is a "birds-eye" view. |

Dimensionality |

The progression from point (0-dimensional) to line (1-dimensional) to plane (2-dimensional) to space (3-dimensional) and beyond leads us to the question - if mapping from higher order dimensions to lower ones loses vital information (as we can readily observe with optical illusions resulting from third to second dimensional mapping), does our "fixation" with a 3-dimensional space introduce crucial distortions in our view of reality that a higher-dimensional perspective would not lead us to? |

Fractals and Recursive Geometries |

There is a wealth of good literature on this subject; it's always fascinating how nature propagates the same essence regardless of the magnitude of its expression...our spirit is spaceless yet can manifest aspects of its individuality at any scale. |

Perfect Right Triangles |

The 3/4/5, 5/12/13 and 7/24/25 triangles are examples of right triangles whose sides are whole numbers. The graphic above contains several of each of these triangles. The 3/4/5 triangle is contained within the so-called "King's Chamber" of the Great Pyramid, along with the 2/3/root5 and 5/root5/2root5 triangles, utilizing the various diagonals and sides. |
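Each triangle named above can be tested against the Pythagorean relation a^2 + b^2 = c^2. This is a minimal sketch; the helper `is_right_triangle` is a hypothetical name, and it sorts the sides so that the longest is treated as the hypotenuse (for 2/3/root5, that is the side of length 3).

```python
import math


def is_right_triangle(a: float, b: float, c: float) -> bool:
    """True if the side lengths satisfy a^2 + b^2 = c^2, with c the longest side."""
    a, b, c = sorted((a, b, c))
    return math.isclose(a * a + b * b, c * c)


root5 = math.sqrt(5)
triangles = {
    "3/4/5": (3, 4, 5),
    "5/12/13": (5, 12, 13),
    "7/24/25": (7, 24, 25),
    "2/3/root5": (2, 3, root5),
    "5/root5/2root5": (5, root5, 2 * root5),
}

for name, sides in triangles.items():
    print(name, is_right_triangle(*sides))
```

Only the first three are whole-number (Pythagorean) triples; the last two are right triangles with irrational sides.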

The Platonic Solids |

The 5 Platonic solids (Tetrahedron, Cube (or Hexahedron), Octahedron, Dodecahedron and Icosahedron) are ideal, primal models of crystal patterns that occur throughout the world of minerals in countless variations. These are the only five regular polyhedra, that is, the only five solids made from the same equilateral, equiangular polygons. To the Greeks, these solids symbolized fire, earth, air, spirit (or ether) and water respectively. The cube and octahedron are duals, meaning that one can be created by connecting the centers of the faces of the other. The icosahedron and dodecahedron are also duals of each other, and three mutually perpendicular, mutually bisecting golden rectangles can be drawn connecting their vertices and midpoints, respectively. The tetrahedron is a dual to itself.
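The duality relationships described above show up in the vertex, edge and face counts: taking the dual swaps vertices with faces and keeps the number of edges. The sketch below tabulates the counts and checks this. Euler's polyhedron formula (V - E + F = 2) is not mentioned in the text and is included only as an extra sanity check; the dictionary names are illustrative.

```python
# (vertices, edges, faces) for the five Platonic solids
SOLIDS = {
    "tetrahedron": (4, 6, 4),
    "cube": (8, 12, 6),
    "octahedron": (6, 12, 8),
    "dodecahedron": (20, 30, 12),
    "icosahedron": (12, 30, 20),
}

# Dual pairs named in the paragraph above
DUALS = {
    "tetrahedron": "tetrahedron",
    "cube": "octahedron",
    "octahedron": "cube",
    "dodecahedron": "icosahedron",
    "icosahedron": "dodecahedron",
}

for name, (v, e, f) in SOLIDS.items():
    # Euler's polyhedron formula: V - E + F = 2
    assert v - e + f == 2
    # Taking the dual swaps vertices and faces and keeps the edge count
    assert SOLIDS[DUALS[name]] == (f, e, v)
    print(f"{name}: V={v} E={e} F={f}, dual={DUALS[name]}")
```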

The Archimedean Solids |

There are 13 Archimedean solids, each of which is composed of two or more different regular polygons. Interestingly, 5 (Platonic) and 13 (Archimedean) are both Fibonacci numbers, and 5, 12 and 13 form a perfect right triangle.

Metatron's Cube |

Metatron's Cube contains 2-dimensional images of the Platonic Solids (as shown above) and many other primal forms. |

The Flower of Life |

Indelibly etched on the walls of the temple of the Osirion at Abydos, Egypt, the Flower of Life contains a vast Akashic system of information, including templates for the five Platonic Solids. The background graphic for this page is a repetitive hexagonal grid based on the Flower of Life.

Gravitational singularity |

A gravitational singularity or spacetime singularity is a location where the quantities that are used to measure the gravitational field become infinite in a way that does not depend on the coordinate system. These quantities are the scalar invariant curvatures of spacetime, some of which are a measure of the density of matter.

For the purposes of proving the Penrose-Hawking singularity theorems, a spacetime with a singularity is defined to be one that contains geodesics that cannot be extended in a smooth manner. The end of such a geodesic is considered to be the singularity. This is a different definition, useful for proving theorems.

The two most important types of spacetime singularities are curvature singularities and conical singularities. Singularities can also be divided according to whether they are covered by an event horizon or not (naked singularities). According to general relativity, the initial state of the universe, at the beginning of the Big Bang, was a singularity. Another type of singularity predicted by general relativity is inside a black hole: any star collapsing beyond a certain point would form a black hole, inside which a singularity (covered by an event horizon) would be formed, as all the matter would flow into a certain point (or a circular line, if the black hole is rotating). These singularities are also known as curvature singularities. |

Interpretation  |

Many theories in physics have mathematical singularities of one kind or another. Equations for these physical theories predict that the rate of change of some quantity becomes infinite or increases without limit. This is generally a sign for a missing piece in the theory, as in the Ultraviolet Catastrophe and in renormalization.

In supersymmetry, a singularity in the moduli space happens usually when there are additional massless degrees of freedom in that certain point. Similarly, it is thought that singularities in spacetime often mean that there are additional degrees of freedom that exist only within the vicinity of the singularity. The same fields related to the whole spacetime, also exist; for example, the electromagnetic field. In known examples of string theory, the latter degrees of freedom are related to closed strings, while the degrees of freedom are "stuck" to the singularity and related either to open strings or to the twisted sector of an orbifold.

Some theories, such as loop quantum gravity, suggest that singularities may not exist. The idea is that, due to quantum gravity effects, there is a minimum distance beyond which the force of gravity no longer continues to increase as the distance between the masses becomes shorter. |

Types  |

Curvature  |

Solutions to the equations of general relativity or another theory of gravity (such as supergravity), often result in encountering points where the metric blows up to infinity. However, many of these points are in fact completely regular. Moreover, the infinities are merely a result of using an inappropriate coordinate system at this point. Thus, in order to test whether there is a singularity at a certain point, one must check whether at this point diffeomorphism invariant quantities (i.e. scalars) become infinite. Such quantities are the same in every coordinate system, so these infinities will not "go away" by a change of coordinates.

An example is the Schwarzschild solution that describes a non-rotating, uncharged black hole. In coordinate systems convenient for working in regions far away from the black hole, a part of the metric becomes infinite at the event horizon. However, spacetime at the event horizon is regular. The regularity becomes evident when changing to another coordinate system (such as the Kruskal coordinates), where the metric is perfectly smooth. On the other hand, in the center of the black hole, where the metric becomes infinite as well, the solutions suggest that a singularity exists. The existence of the singularity can be verified by noting that the Kretschmann scalar, or square of the Riemann tensor, $R_{\mu\nu\rho\sigma}R^{\mu\nu\rho\sigma}$, which is diffeomorphism invariant, is infinite. While in a non-rotating black hole the singularity occurs at a single point in the model coordinates, called a "point singularity", in a rotating black hole, also known as a Kerr black hole, the singularity occurs on a ring (a circular line), defined as a "ring singularity". Such a singularity may also theoretically become a wormhole.[1]
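The contrast between a coordinate artifact and a true curvature singularity can be sketched numerically. The snippet below is only an illustration: it assumes the standard closed forms for the Schwarzschild solution (radial metric component 1/(1 - r_s/r) and Kretschmann scalar 12 r_s^2 / r^6, with r_s = 2GM/c^2), none of which are written out in the text above, and the function names are hypothetical.

```python
# Assumed standard Schwarzschild expressions (not stated in the text):
#   r_s  = 2 G M / c^2            event horizon radius
#   g_rr = 1 / (1 - r_s / r)      radial metric component, Schwarzschild coordinates
#   K    = 12 r_s^2 / r^6         Kretschmann scalar (coordinate independent)

G = 6.67430e-11      # gravitational constant, m^3 kg^-1 s^-2
C = 2.99792458e8     # speed of light, m/s
M_SUN = 1.989e30     # approximate solar mass, kg


def schwarzschild_radius(mass_kg):
    """Radius of the event horizon for a non-rotating, uncharged mass."""
    return 2.0 * G * mass_kg / C**2


def g_rr(r, mass_kg):
    """Radial metric component; diverges as r approaches r_s."""
    return 1.0 / (1.0 - schwarzschild_radius(mass_kg) / r)


def kretschmann(r, mass_kg):
    """Kretschmann scalar; finite at r = r_s, unbounded as r approaches 0."""
    return 12.0 * schwarzschild_radius(mass_kg) ** 2 / r**6


r_s = schwarzschild_radius(M_SUN)
for factor in (10.0, 1.001, 0.1, 0.001):
    r = factor * r_s
    print(f"r = {factor:>6} r_s   g_rr = {g_rr(r, M_SUN): .3e}   "
          f"K = {kretschmann(r, M_SUN):.3e} m^-4")
```

Near the horizon the metric component grows without bound while the scalar stays finite; only toward r = 0 does the scalar itself diverge, which is the behavior the paragraph describes.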

More generally, a spacetime is considered singular if it is geodesically incomplete, meaning that there are freely-falling particles whose motion cannot be determined at a finite time at the point of reaching the singularity. For example, any observer below the event horizon of a nonrotating black hole would fall into its center within a finite period of time. The simplest Big Bang cosmological model of the universe contains a causal singularity at the start of time (t=0), where all timelike geodesics have no extensions into the past. Extrapolating backward to this hypothetical time 0 results in a universe of size 0 in all spatial dimensions, infinite density, infinite temperature, and infinite space-time curvature. |

Conical  |

A conical singularity occurs when there is a point where the limit of every diffeomorphism invariant quantity is finite, but spacetime is nevertheless not smooth at the point of the limit itself. Spacetime then looks like a cone around this point, with the singularity located at the tip of the cone. The metric can be finite everywhere if a suitable coordinate system is used.

An example of such a conical singularity is a cosmic string. |

Naked  |

Until the early 1990s, it was widely believed that general relativity hides every singularity behind an event horizon, making naked singularities impossible. This is referred to as the cosmic censorship hypothesis. However, in 1991 Shapiro and Teukolsky performed computer simulations of a rotating plane of dust that indicated that general relativity might allow for "naked" singularities. What these objects would actually look like in such a model is unknown. Nor is it known whether singularities would still arise if the simplifying assumptions used to make the simulation were removed. |

Entropy  |

Before Stephen Hawking came up with the concept of Hawking radiation, the question of black holes having entropy was avoided. However, this concept demonstrates that black holes can radiate energy, which conserves entropy and solves the incompatibility problems with the second law of thermodynamics. Entropy, however, implies heat and therefore temperature. The loss of energy also suggests that black holes do not last forever, but rather "evaporate" slowly. Small black holes tend to be hotter whereas larger ones tend to be colder. All known black holes are so large that their temperature is far below that of the cosmic background radiation, so they are all gaining energy. They will not begin to lose energy until a cosmological redshift of more than a million is reached, rather than the thousand or so since the background radiation formed. |
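The claim that small black holes are hotter and that known black holes are colder than the background radiation can be checked with a rough calculation. The sketch below assumes the standard Hawking temperature formula T = ħc³ / (8πGMk_B), which is not stated in the text, and a present-day background temperature of about 2.725 K; the function names are hypothetical.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
C = 2.99792458e8         # speed of light, m/s
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
K_B = 1.380649e-23       # Boltzmann constant, J/K
T_CMB = 2.725            # assumed present background temperature, K
M_SUN = 1.989e30         # approximate solar mass, kg


def hawking_temperature(mass_kg):
    """Assumed formula: T = hbar c^3 / (8 pi G M k_B), inversely proportional to mass."""
    return HBAR * C**3 / (8.0 * math.pi * G * mass_kg * K_B)


def mass_at_temperature(temp_k):
    """Mass at which the Hawking temperature equals temp_k (same formula, inverted)."""
    return HBAR * C**3 / (8.0 * math.pi * G * K_B * temp_k)


for mass in (1.0e12, 1.0e22, M_SUN, 10.0 * M_SUN):
    t = hawking_temperature(mass)
    state = "losing" if t > T_CMB else "gaining"
    print(f"M = {mass:.3e} kg   T = {t:.3e} K   net {state} energy")

print(f"break-even mass: {mass_at_temperature(T_CMB):.3e} kg")
```

Under these assumptions a black hole of stellar mass sits many orders of magnitude below the background temperature, consistent with the statement that all known black holes are currently gaining energy.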

Unified field theory  |

This article describes unified field theory as it is currently understood in connection with quantum theory. Earlier attempts based on classical physics are described in the article on classical unified field theories.

There may be no a priori reason why the correct description of nature has to be a unified field theory; however, this goal has led to a great deal of progress in modern theoretical physics and continues to motivate research. Unified field theory is only one possible approach to unification of physics.

|

Introduction  |

According to the current understanding of physics, forces between objects (e.g. gravitation) are not transmitted directly between the two objects, but instead go through intermediary entities called fields. All four of the known fundamental forces are mediated by fields, which in the Standard Model of particle physics result from exchange of bosons (integer-spin particles). Specifically, the four interactions to be unified are (from strongest to weakest): the strong interaction, the electromagnetic interaction, the weak interaction, and gravitation.

|

History  |

The first successful (classical) unified field theory was developed by James Clerk Maxwell. In 1820 Hans Christian Ørsted discovered that electric currents exerted forces on magnets, while in 1831, Michael Faraday made the observation that time-varying magnetic fields could induce electric currents. Until then, electricity and magnetism had been thought of as unrelated phenomena. In 1864, Maxwell published his famous paper on a dynamical theory of the electromagnetic field. This was the first example of a theory that was able to encompass previous separate field theories (namely electricity and magnetism) to provide a unifying theory of electromagnetism. Later, in his theory of special relativity Albert Einstein was able to explain the unity of electricity and magnetism as a consequence of the unification of space and time into an entity we now call spacetime.

In 1921 Theodor Kaluza extended general relativity to five dimensions, and in 1926 Oskar Klein proposed that the fourth spatial dimension be curled up (or compactified) into a small, unobserved circle. This was dubbed Kaluza-Klein theory. It was quickly noticed that this extra spatial direction gave rise to an additional force similar to electricity and magnetism. This was pursued as the basis for some of Albert Einstein's later unsuccessful attempts at a unified field theory. Einstein and others pursued various non-quantum approaches to unifying these forces; however, as quantum theory became generally accepted as fundamental, most physicists came to view all such theories as doomed to failure. |

Modern progress  |

In 1963 American physicist Sheldon Glashow proposed that the weak nuclear force and electricity and magnetism could arise from a partially unified electroweak theory. In 1967, Pakistani Abdus Salam and American Steven Weinberg independently revised Glashow's theory by having the masses for the W particle and Z particle arise through spontaneous symmetry breaking with the Higgs mechanism. This unified theory was governed by the exchange of four particles: the photon for electromagnetic interactions, and a neutral Z particle and two charged W particles for the weak interaction. As a result of the spontaneous symmetry breaking, the weak force becomes short range and the W and Z bosons acquire masses of 80.4 and 91.2 GeV/c², respectively. Their theory was first given experimental support by the discovery of weak neutral currents in 1973. In 1983, the Z and W bosons were first produced at CERN by Carlo Rubbia's team. For their insights, Salam, Glashow and Weinberg were awarded the Nobel Prize in Physics in 1979. Carlo Rubbia and Simon van der Meer received the Prize in 1984.

After Gerardus 't Hooft showed that the Glashow-Weinberg-Salam electroweak theory was mathematically consistent, the electroweak theory became a template for further attempts at unifying forces. In 1974, Sheldon Glashow and Howard Georgi proposed unifying the strong and electroweak interactions into the Georgi-Glashow model, the first Grand Unified Theory, which would have observable effects for energies much above 100 GeV.

Since then there have been several proposals for Grand Unified Theories, e.g. the Pati-Salam model, although none is currently universally accepted. A major problem for experimental tests of such theories is the energy scale involved, which is well beyond the reach of current accelerators. Grand Unified Theories make predictions for the relative strengths of the strong, weak, and electromagnetic forces, and in 1991 LEP determined that supersymmetric theories have the correct ratio of couplings for a Georgi-Glashow Grand Unified Theory. Many Grand Unified Theories (but not Pati-Salam) predict that the proton can decay, and if this were to be seen, details of the decay products could give hints at more aspects of the Grand Unified Theory. It is at present unknown if the proton can decay, although experiments have determined a lower bound of $10^{35}$ years for its lifetime. |

Current status  |

|

Non-mainstream theories  |

Albert Einstein famously spent the last two decades of his life searching for a Unified Field Theory. This has led to a great deal of fascination with the subject and has drawn many people from outside the mainstream of the physics community to work on such a theory. Most of this work typically appears in non-peer reviewed sources, such as self-published books or personal websites. The work that appears outside of the standard scientific channels may or may not be considered pseudoscience by definition.

Examples of "non-mainstream" theories are Heim theory and Antony Garrett Lisi's "An Exceptionally Simple Theory of Everything."

|

Quantum mechanics |

Quantum mechanics (QM) is a set of scientific principles describing the known behavior of energy and matter that predominate at the atomic scale. QM gets its name from the notion of a quantum, and the quantum value is the Planck constant. The wave–particle duality of energy and matter at the atomic scale provides a unified view of the behavior of particles such as photons and electrons. While the notion of the photon as a quantum of light energy is commonly understood as a particle of light that has an energy value governed by the Planck constant, what is quantized for an electron is the angular momentum it can have as it is bound in an atomic orbital. When not bound to an atom, an electron's energy is no longer quantized, but it displays, like any other massive particle, a Compton wavelength. While a photon does not have mass, it does have linear momentum. The full significance of the Planck constant is expressed in physics through the abstract mathematical notion of action.

The mathematical formulation of quantum mechanics is abstract and its implications are often non-intuitive. The centerpiece of this mathematical system is the wavefunction. The wavefunction is a mathematical function of time and space that can provide information about the position and momentum of a particle, but only as probabilities, as dictated by the constraints imposed by the uncertainty principle. Mathematical manipulations of the wavefunction usually involve the bra-ket notation, which requires an understanding of complex numbers and linear functionals. Many of the results of QM can only be expressed mathematically and do not have models that are as easy to visualize as those of classical mechanics. For instance, the ground state in a quantum mechanical model is a non-zero energy state that is the lowest permitted energy state of a system, rather than a more traditional system that is thought of as simply being at rest with zero kinetic energy.

Overview

The word quantum is Latin for "how great" or "how much." In quantum mechanics, it refers to a discrete unit that quantum theory assigns to certain physical quantities, such as the energy of an atom at rest (see Figure 1, at right). The discovery that particles are discrete packets of energy with wave-like properties led to the branch of physics that deals with atomic and subatomic systems which is today called quantum mechanics. It is the underlying mathematical framework of many fields of physics, including condensed matter physics, solid-state physics, atomic physics, molecular physics, computational chemistry, quantum chemistry, particle physics, and nuclear physics. The foundations of quantum mechanics were established during the first half of the twentieth century by Werner Heisenberg, Max Planck, Louis de Broglie, Albert Einstein, Niels Bohr, Erwin Schrödinger, Max Born, John von Neumann, Paul Dirac, Wolfgang Pauli, David Hilbert, and others. Some fundamental aspects of the theory are still actively studied.

Quantum mechanics is essential to understand the behavior of systems at atomic length scales and smaller. For example, if classical mechanics governed the workings of an atom, electrons would rapidly travel towards and collide with the nucleus, making stable atoms impossible. However, in the natural world the electrons normally remain in an uncertain, non-deterministic "smeared" (wave-particle wave function) orbital path around or "through" the nucleus, defying classical electromagnetism.

Quantum mechanics was initially developed to provide a better explanation of the atom, especially the spectra of light emitted by different atomic species. The quantum theory of the atom was developed as an explanation for the electron's staying in its orbital, which could not be explained by Newton's laws of motion and by Maxwell's laws of classical electromagnetism.

In the formalism of quantum mechanics, the state of a system at a given time is described by a complex wave function (sometimes referred to as orbitals in the case of atomic electrons), and more generally, elements of a complex vector space. This abstract mathematical object allows for the calculation of probabilities of outcomes of concrete experiments. For example, it allows one to compute the probability of finding an electron in a particular region around the nucleus at a particular time. Contrary to classical mechanics, one can never make simultaneous predictions of conjugate variables, such as position and momentum, with accuracy. For instance, electrons may be considered to be located somewhere within a region of space, but with their exact positions being unknown. Contours of constant probability, often referred to as “clouds,” may be drawn around the nucleus of an atom to conceptualize where the electron might be located with the most probability. Heisenberg's uncertainty principle quantifies the inability to precisely locate the particle given its conjugate.

The other exemplar that led to quantum mechanics was the study of electromagnetic waves such as light. When it was found in 1900 by Max Planck that the energy of waves could be described as consisting of small packets or quanta, Albert Einstein further developed this idea to show that an electromagnetic wave such as light could be described by a particle called the photon with a discrete energy dependent on its frequency. This led to a theory of unity between subatomic particles and electromagnetic waves called wave–particle duality in which particles and waves were neither one nor the other, but had certain properties of both. While quantum mechanics describes the world of the very small, it also is needed to explain certain “macroscopic quantum systems” such as superconductors and superfluids.

Broadly speaking, quantum mechanics incorporates four classes of phenomena that classical physics cannot account for: (I) the quantization (discretization) of certain physical quantities, (II) wave-particle duality, (III) the uncertainty principle, and (IV) quantum entanglement. Each of these phenomena is described in detail in subsequent sections.

|

History  |

The history of quantum mechanics[7] began essentially with the 1838 discovery of cathode rays by Michael Faraday, the 1859 statement of the black body radiation problem by Gustav Kirchhoff, the 1877 suggestion by Ludwig Boltzmann that the energy states of a physical system could be discrete, and the 1900 quantum hypothesis by Max Planck that any energy is radiated and absorbed in quantities divisible by discrete "energy elements," E, such that each of these energy elements is proportional to the frequency ν with which they each individually radiate energy, as defined by the formula $E = h\nu$, where h is Planck's action constant. Planck insisted[8] that this was simply an aspect of the processes of absorption and emission of radiation and had nothing to do with the physical reality of the radiation itself. However, at that time, this appeared not to explain the photoelectric effect (1839), i.e. that shining light on certain materials can function to eject electrons from the material. In 1905, basing his work on Planck’s quantum hypothesis, Albert Einstein[9] postulated that light itself consists of individual quanta.

In the mid-1920s, developments in quantum mechanics quickly led to it becoming the standard formulation for atomic physics. In the summer of 1925, Bohr and Heisenberg published results that closed the "Old Quantum Theory". Light quanta came to be called photons (1926). From Einstein's simple postulation was born a flurry of debating, theorizing and testing, and thus the entire field of quantum physics, leading to its wider acceptance at the fifth Solvay Conference in 1927. |

Quantum mechanics and classical physics  |

Predictions of quantum mechanics have been verified experimentally to a very high degree of accuracy. Thus, the current logic of correspondence principle between classical and quantum mechanics is that all objects obey laws of quantum mechanics, and classical mechanics is just a quantum mechanics of large systems (or a statistical quantum mechanics of a large collection of particles). Laws of classical mechanics thus follow from laws of quantum mechanics at the limit of large systems or large quantum numbers.[10] However, chaotic systems do not have good quantum numbers, and quantum chaos studies the relationship between classical and quantum descriptions in these systems.

The main differences between classical and quantum theories have already been mentioned above in the remarks on the Einstein-Podolsky-Rosen paradox. Essentially the difference boils down to the statement that quantum mechanics is coherent (addition of amplitudes), whereas classical theories are incoherent (addition of intensities). Thus, such quantities as coherence lengths and coherence times come into play. For microscopic bodies the extension of the system is certainly much smaller than the coherence length; for macroscopic bodies one expects that it should be the other way round. An exception to this rule can occur at extremely low temperatures, when quantum behavior can manifest itself on more "macroscopic" scales (see Bose-Einstein condensate).

This is in accordance with the following observations:

Many “macroscopic” properties of “classic” systems are direct consequences of the quantum behavior of their parts. For example, the stability of bulk matter (which consists of atoms and molecules that would quickly collapse under electric forces alone), the rigidity of this matter, and its mechanical, thermal, chemical, optical and magnetic properties are all results of the interaction of electric charges under the rules of quantum mechanics.

While the seemingly exotic behavior of matter posited by quantum mechanics and relativity theory becomes more apparent when dealing with extremely fast-moving or extremely tiny particles, the laws of classical “Newtonian” physics still remain accurate in predicting the behavior of surrounding (“large”) objects—of the order of the size of large molecules and bigger—at velocities much smaller than the velocity of light. |

Theory  |

There are numerous mathematically equivalent formulations of quantum mechanics. One of the oldest and most commonly used formulations is the transformation theory proposed by Cambridge theoretical physicist Paul Dirac, which unifies and generalizes the two earliest formulations of quantum mechanics, matrix mechanics (invented by Werner Heisenberg) and wave mechanics (invented by Erwin Schrödinger).

In this formulation, the instantaneous state of a quantum system encodes the probabilities of its measurable properties, or "observables". Examples of observables include energy, position, momentum, and angular momentum. Observables can be either continuous (e.g., the position of a particle) or discrete (e.g., the energy of an electron bound to a hydrogen atom).

Generally, quantum mechanics does not assign definite values to observables. Instead, it makes predictions using probability distributions; that is, the probability of obtaining possible outcomes from measuring an observable. Oftentimes these results are skewed by many causes, such as dense probability clouds[18] or quantum state nuclear attraction. Naturally, these probabilities will depend on the quantum state at the "instant" of the measurement. Hence, uncertainty is involved in the value. There are, however, certain states that are associated with a definite value of a particular observable. These are known as "eigenstates" of the observable ("eigen" can be roughly translated from German as inherent or as a characteristic[21]). In the everyday world, it is natural and intuitive to think of everything (every observable) as being in an eigenstate. Everything appears to have a definite position, a definite momentum, a definite energy, and a definite time of occurrence. However, quantum mechanics does not pinpoint the exact values of a particle for its position and momentum (since they are conjugate pairs) or its energy and time (since they too are conjugate pairs); rather, it only provides a range of probabilities of where that particle might be given its momentum and momentum probability. Therefore, it is helpful to use different words to describe states having uncertain values and states having definite values (eigenstate).

For example, consider a free particle. In quantum mechanics, there is wave-particle duality, so the properties of the particle can be described as the properties of a wave. Therefore, its quantum state can be represented as a wave of arbitrary shape extending over space as a wave function. The position and momentum of the particle are observables. The Uncertainty Principle states that the position and the momentum cannot both be measured with full precision at the same time. However, one can measure the position alone of a moving free particle, creating an eigenstate of position with a wavefunction that is very large (a Dirac delta) at a particular position x and zero everywhere else. If one performs a position measurement on such a wavefunction, the result x will be obtained with 100% probability (full certainty). This is called an eigenstate of position (mathematically more precise: a generalized position eigenstate (eigendistribution)). If the particle is in an eigenstate of position then its momentum is completely unknown. On the other hand, if the particle is in an eigenstate of momentum then its position is completely unknown. In an eigenstate of momentum having a plane wave form, it can be shown that the wavelength is equal to h/p, where h is Planck's constant and p is the momentum of the eigenstate.
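As a small worked example of the relation wavelength = h/p, the sketch below evaluates it for an electron. The chosen speed of 10^6 m/s and the non-relativistic momentum p = mv are illustrative assumptions, and the function name is hypothetical.

```python
H = 6.62607015e-34       # Planck constant, J s
M_E = 9.1093837015e-31   # electron rest mass, kg


def de_broglie_wavelength(momentum):
    """Wavelength of a momentum eigenstate (plane wave): lambda = h / p."""
    if momentum <= 0:
        raise ValueError("momentum must be positive")
    return H / momentum


# Assumed example: an electron moving at 1e6 m/s, slow enough that p = m v is adequate.
speed = 1.0e6
momentum = M_E * speed
wavelength = de_broglie_wavelength(momentum)
print(f"p = {momentum:.3e} kg m/s   lambda = {wavelength:.3e} m")
```

The result is a few tenths of a nanometre, comparable to atomic spacings; doubling the momentum halves the wavelength.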

Usually, a system will not be in an eigenstate of the observable we are interested in. However, if one measures the observable, the wavefunction will instantaneously be an eigenstate (or generalized eigenstate) of that observable. This process is known as wavefunction collapse, a debatable process. It involves expanding the system under study to include the measurement device. If one knows the corresponding wave function at the instant before the measurement, one will be able to compute the probability of collapsing into each of the possible eigenstates. For example, the free particle in the previous example will usually have a wavefunction that is a wave packet centered around some mean position x0, neither an eigenstate of position nor of momentum. When one measures the position of the particle, it is impossible to predict with certainty the result. It is probable, but not certain, that it will be near x0, where the amplitude of the wave function is large. After the measurement is performed, having obtained some result x, the wave function collapses into a position eigenstate centered at x.

Wave functions can change as time progresses. An equation known as the Schrödinger equation describes how wave functions change in time, a role similar to Newton's second law in classical mechanics. The Schrödinger equation, applied to the aforementioned example of the free particle, predicts that the center of a wave packet will move through space at a constant velocity, like a classical particle with no forces acting on it. However, the wave packet will also spread out as time progresses, which means that the position becomes more uncertain. This also has the effect of turning position eigenstates (which can be thought of as infinitely sharp wave packets) into broadened wave packets that are no longer position eigenstates.[27] Some wave functions produce probability distributions that are constant, or independent of time; for example, in a stationary state of constant energy, time drops out of the absolute square of the wave function. Many systems that are treated dynamically in classical mechanics are described by such "static" wave functions. For example, a single electron in an unexcited atom is pictured classically as a particle moving in a circular trajectory around the atomic nucleus, whereas in quantum mechanics it is described by a static, spherically symmetric wavefunction surrounding the nucleus. (Note that only the lowest angular momentum states, labeled s, are spherically symmetric.)
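The two behaviors described for the free particle, uniform motion of the packet center and growing position uncertainty, can be illustrated with the textbook Gaussian wave packet. The sketch assumes the standard closed-form result for a free Gaussian packet, σ(t) = σ₀·sqrt(1 + (ħt / (2mσ₀²))²), which is not derived in the text; the initial width and momentum are arbitrary example values.

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J s
M_E = 9.1093837015e-31   # electron rest mass, kg


def packet_center(x0, p0, mass, t):
    """Center of the packet: moves at constant velocity p0 / m, like a classical particle."""
    return x0 + (p0 / mass) * t


def packet_width(sigma0, mass, t):
    """Assumed Gaussian result: sigma(t) = sigma0 * sqrt(1 + (hbar t / (2 m sigma0^2))^2)."""
    return sigma0 * math.sqrt(1.0 + (HBAR * t / (2.0 * mass * sigma0**2)) ** 2)


sigma0 = 1.0e-10         # example initial width, m (roughly atomic size)
p0 = M_E * 1.0e6         # example mean momentum, kg m/s

for t in (0.0, 1.0e-16, 1.0e-15, 1.0e-14):
    print(f"t = {t:.1e} s   center = {packet_center(0.0, p0, M_E, t):.3e} m   "
          f"width = {packet_width(sigma0, M_E, t):.3e} m")
```

The narrower the initial packet, the faster it spreads, which matches the remark that an infinitely sharp position eigenstate broadens immediately.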

The time evolution of wave functions is deterministic in the sense that, given a wavefunction at an initial time, it makes a definite prediction of what the wavefunction will be at any later time. During a measurement, the change of the wavefunction into another one is not deterministic, but rather unpredictable, i.e., random.

The probabilistic nature of quantum mechanics thus stems from the act of measurement. This is one of the most difficult aspects of quantum systems to understand. It was the central topic in the famous Bohr-Einstein debates, in which the two scientists attempted to clarify these fundamental principles by way of thought experiments. In the decades after the formulation of quantum mechanics, the question of what constitutes a "measurement" has been extensively studied. Interpretations of quantum mechanics have been formulated to do away with the concept of "wavefunction collapse"; see, for example, the relative state interpretation. The basic idea is that when a quantum system interacts with a measuring apparatus, their respective wavefunctions become entangled, so that the original quantum system ceases to exist as an independent entity. For details, see the article on measurement in quantum mechanics. |

Mathematical formulation  |

In the mathematically rigorous formulation of quantum mechanics, developed by Paul Dirac[31] and John von Neumann, the possible states of a quantum mechanical system are represented by unit vectors (called "state vectors") residing in a complex separable Hilbert space (variously called the "state space" or the "associated Hilbert space" of the system) well defined up to a complex number of norm 1 (the phase factor). In other words, the possible states are points in the projectivization of a Hilbert space, usually called the complex projective space. The exact nature of this Hilbert space is dependent on the system; for example, the state space for position and momentum states is the space of square-integrable functions, while the state space for the spin of a single proton is just the product of two complex planes. Each observable is represented by a maximally-Hermitian (precisely: by a self-adjoint) linear operator acting on the state space. Each eigenstate of an observable corresponds to an eigenvector of the operator, and the associated eigenvalue corresponds to the value of the observable in that eigenstate. If the operator's spectrum is discrete, the observable can only attain those discrete eigenvalues.

The time evolution of a quantum state is described by the Schrödinger equation, in which the Hamiltonian, the operator corresponding to the total energy of the system, generates time evolution.

The inner product between two state vectors is a complex number known as a probability amplitude. During a measurement, the probability that a system collapses from a given initial state to a particular eigenstate is given by the square of the absolute value of the probability amplitudes between the initial and final states. The possible results of a measurement are the eigenvalues of the operator - which explains the choice of Hermitian operators, for which all the eigenvalues are real. We can find the probability distribution of an observable in a given state by computing the spectral decomposition of the corresponding operator. Heisenberg's uncertainty principle is represented by the statement that the operators corresponding to certain observables do not commute.
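A two-level system is enough to illustrate these rules. The sketch below is an illustration only: it picks a spin-1/2 state with amplitudes (3/5, 4/5) in the z-basis (an arbitrary example), computes measurement probabilities as squared absolute values of inner products with the eigenvectors, and shows that the Pauli matrices for the x and z directions do not commute. All helper names are hypothetical.

```python
import math


def inner(phi, psi):
    """Inner product <phi|psi>: conjugate the first vector, then sum the products."""
    return sum(a.conjugate() * b for a, b in zip(phi, psi))


def normalize(psi):
    """Rescale a state vector to unit norm."""
    norm = math.sqrt(sum(abs(a) ** 2 for a in psi))
    return [a / norm for a in psi]


def probabilities(psi, eigenvectors):
    """Probability of each outcome: squared absolute value of the amplitude."""
    return [abs(inner(e, psi)) ** 2 for e in eigenvectors]


def matmul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def commutator(a, b):
    """[A, B] = AB - BA."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]


psi = normalize([3.0, 4.0])                     # example state in the z-basis
z_basis = [[1.0, 0.0], [0.0, 1.0]]              # eigenvectors of the z observable
x_basis = [normalize([1.0, 1.0]), normalize([1.0, -1.0])]  # eigenvectors of the x observable

p_z = probabilities(psi, z_basis)
p_x = probabilities(psi, x_basis)
print("z-measurement probabilities:", p_z)
print("x-measurement probabilities:", p_x)

PAULI_X = [[0, 1], [1, 0]]
PAULI_Z = [[1, 0], [0, -1]]
print("[X, Z] =", commutator(PAULI_X, PAULI_Z))  # non-zero: the observables are incompatible
```

The same state gives different probability distributions for the two observables, and the non-vanishing commutator is the algebraic statement that no state has definite values for both.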

The Schrödinger equation acts on the entire probability amplitude, not merely its absolute value. Whereas the absolute value of the probability amplitude encodes information about probabilities, its phase encodes information about the interference between quantum states. This gives rise to the wave-like behavior of quantum states.

It turns out that analytic solutions of Schrödinger's equation are only available for a small number of model Hamiltonians, of which the quantum harmonic oscillator, the particle in a box, the hydrogen molecular ion and the hydrogen atom are the most important representatives. Even the helium atom, which contains just one more electron than hydrogen, defies all attempts at a fully analytic treatment. There exist several techniques for generating approximate solutions. For instance, in the method known as perturbation theory one uses the analytic results for a simple quantum mechanical model to generate results for a more complicated model related to the simple model by, for example, the addition of a weak potential energy. Another method is the "semi-classical equation of motion" approach, which applies to systems for which quantum mechanics produces weak deviations from classical behavior. The deviations can be calculated based on the classical motion. This approach is important for the field of quantum chaos.
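One of the exactly solvable models named above, the particle in a box, has a simple closed-form spectrum. The sketch below assumes the standard one-dimensional infinite-well result E_n = n²h² / (8mL²), which the text does not write out, and uses an electron in a 1 nm box as an arbitrary example; the function name is hypothetical.

```python
H = 6.62607015e-34       # Planck constant, J s
M_E = 9.1093837015e-31   # electron rest mass, kg
EV = 1.602176634e-19     # joules per electronvolt


def box_energy(n, mass, length):
    """Assumed infinite-well spectrum: E_n = n^2 h^2 / (8 m L^2), n = 1, 2, 3, ..."""
    if n < 1:
        raise ValueError("quantum number n must be a positive integer")
    return n**2 * H**2 / (8.0 * mass * length**2)


length = 1.0e-9          # example box width, m
levels = [box_energy(n, M_E, length) for n in range(1, 5)]
for n, energy in enumerate(levels, start=1):
    print(f"n = {n}   E = {energy / EV:.3f} eV")
```

The lowest level is strictly positive, an instance of the non-zero ground state energy mentioned earlier, and the levels scale as n², so the allowed energies are discrete rather than continuous.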

An alternative formulation of quantum mechanics is Feynman's path integral formulation, in which a quantum-mechanical amplitude is considered as a sum over histories between initial and final states; this is the quantum-mechanical counterpart of action principles in classical mechanics.

|

Interactions with other scientific theories  |

The fundamental rules of quantum mechanics are very deep. They assert that the state space of a system is a Hilbert space and that the observables are Hermitian operators acting on that space, but they do not tell us which Hilbert space or which operators to use. These must be chosen appropriately in order to obtain a quantitative description of a quantum system. An important guide for making these choices is the correspondence principle, which states that the predictions of quantum mechanics reduce to those of classical physics when a system moves to higher energies or, equivalently, larger quantum numbers. In other words, classical mechanics is simply a quantum mechanics of large systems. This "high energy" limit is known as the classical or correspondence limit. One can therefore start from an established classical model of a particular system, and attempt to guess the underlying quantum model that gives rise to the classical model in the correspondence limit.

When quantum mechanics was originally formulated, it was applied to models whose correspondence limit was non-relativistic classical mechanics. For instance, the well-known model of the quantum harmonic oscillator uses an explicitly non-relativistic expression for the kinetic energy of the oscillator, and is thus a quantum version of the classical harmonic oscillator.
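One way to see the correspondence limit at work is to look at how coarse the energy ladder of the quantum harmonic oscillator appears at large quantum numbers. The sketch below assumes the standard textbook levels E_n = (n + 1/2) ħω, measured in units of ħω; the function names are illustrative.

```python
def oscillator_energy(n):
    """Energy of level n in units of hbar*omega: E_n = n + 1/2."""
    return n + 0.5

def relative_spacing(n):
    """Gap to the next level divided by the level's own energy."""
    return (oscillator_energy(n + 1) - oscillator_energy(n)) / oscillator_energy(n)

for n in (0, 10, 1000, 100000):
    print(n, relative_spacing(n))
```

The relative spacing falls off as 1 / (n + 1/2), so at large quantum numbers the discrete levels become hard to distinguish from the continuous energies of the classical oscillator.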

Early attempts to merge quantum mechanics with special relativity involved the replacement of the Schrödinger equation with a covariant equation such as the Klein-Gordon equation or the Dirac equation. While these theories were successful in explaining many experimental results, they had certain unsatisfactory qualities stemming from their neglect of the relativistic creation and annihilation of particles. A fully relativistic quantum theory required the development of quantum field theory, which applies quantization to a field rather than a fixed set of particles. The first complete quantum field theory, quantum electrodynamics, provides a fully quantum description of the electromagnetic interaction.

The full apparatus of quantum field theory is often unnecessary for describing electrodynamic systems. A simpler approach, one employed since the inception of quantum mechanics, is to treat charged particles as quantum mechanical objects being acted on by a classical electromagnetic field. For example, the elementary quantum model of the hydrogen atom describes the electric field of the hydrogen atom using a classical Coulomb potential. This "semi-classical" approach fails if quantum fluctuations in the electromagnetic field play an important role, such as in the emission of photons by charged particles.

Quantum field theories for the strong nuclear force and the weak nuclear force have been developed. The quantum field theory of the strong nuclear force is called quantum chromodynamics, and describes the interactions of the subnuclear particles: quarks and gluons. The weak nuclear force and the electromagnetic force were unified, in their quantized forms, into a single quantum field theory known as electroweak theory, by the physicists Abdus Salam, Sheldon Glashow and Steven Weinberg.

It has proven difficult to construct quantum models of gravity, the remaining fundamental force. Semi-classical approximations are workable, and have led to predictions such as Hawking radiation. However, the formulation of a complete theory of quantum gravity is hindered by apparent incompatibilities between general relativity, the most accurate theory of gravity currently known, and some of the fundamental assumptions of quantum theory. The resolution of these incompatibilities is an area of active research, and theories such as string theory are among the possible candidates for a future theory of quantum gravity.

In the 21st century classical mechanics has been extended into the complex domain and complex classical mechanics exhibits behaviours very similar to quantum mechanics.

|

|

The particle in a 1-dimensional potential energy box is the simplest example where restraints lead to the quantization of energy levels. The box is defined as zero potential energy inside a certain interval and infinite everywhere outside that interval. For the 1-dimensional case in the x direction, the time-independent Schrödinger equation inside the box can be written as:

$$-\frac{\hbar^2}{2m}\frac{d^2\psi(x)}{dx^2} = E\,\psi(x)$$
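As a rough numerical illustration of the quantization just described, the sketch below evaluates the standard textbook energy levels of this model, E_n = n²π²ħ² / (2mL²). The choice of an electron in a box of width L = 1 nm is an assumption made only for the example, as are the function and constant names.

```python
import math

HBAR = 1.054571817e-34            # reduced Planck constant, J*s
ELECTRON_MASS = 9.1093837015e-31  # kg
JOULES_PER_EV = 1.602176634e-19

def box_energy_ev(n, width_m, mass=ELECTRON_MASS):
    """Energy of level n (n = 1, 2, ...) for a particle in a box, in electronvolts."""
    joules = (n * math.pi * HBAR) ** 2 / (2.0 * mass * width_m ** 2)
    return joules / JOULES_PER_EV

WIDTH = 1e-9  # assumed box width: 1 nanometre
levels = [box_energy_ev(n, WIDTH) for n in (1, 2, 3)]
print(levels)
```

Only the discrete values E_1, 4 E_1, 9 E_1 and so on are allowed, with E_1 a few tenths of an electronvolt for these assumed parameters.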

Attempts at a unified field theory

As of 2010 the quest for unifying the fundamental forces through quantum mechanics is still ongoing. Quantum electrodynamics (or "quantum electromagnetism"), which is currently the most accurately tested physical theory,[35] has been successfully merged with the weak nuclear force into the electroweak force, and work is currently being done to merge the electroweak and strong force into the electrostrong force. Current predictions state that at around 10^14 GeV the three aforementioned forces are fused into a single unified field.[36] Beyond this "grand unification", it is speculated that it may be possible to merge gravity with the other three gauge symmetries, expected to occur at roughly 10^19 GeV. However, while special relativity is parsimoniously incorporated into quantum electrodynamics, general relativity, currently the best theory describing the gravitational force, has not been fully incorporated into quantum theory. |
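The figure of roughly 10^19 GeV quoted for the scale at which gravity might join the other forces is of the same order as the Planck energy, sqrt(ħc⁵/G). Identifying the two is an assumption of this sketch rather than something the text states; the constants are standard values and the function name is illustrative.

```python
import math

HBAR = 1.054571817e-34            # reduced Planck constant, J*s
C = 2.99792458e8                  # speed of light, m/s
G = 6.67430e-11                   # Newtonian gravitational constant, m^3 kg^-1 s^-2
JOULES_PER_GEV = 1.602176634e-10

def planck_energy_gev():
    """Planck energy sqrt(hbar * c^5 / G), converted from joules to GeV."""
    return math.sqrt(HBAR * C ** 5 / G) / JOULES_PER_GEV

planck = planck_energy_gev()
print(f"Planck energy: {planck:.3e} GeV")
print(f"Orders of magnitude above 1e14 GeV: {math.log10(planck / 1e14):.1f}")
```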

Relativity and quantum mechanics  |

Even though the defining postulates of both Einstein's theory of general relativity and quantum theory are supported by rigorous and repeated empirical evidence, and although they do not directly contradict each other theoretically (at least with regard to their primary claims), the two theories have proven resistant to being incorporated within one cohesive model.

Einstein himself is well known for rejecting some of the claims of quantum mechanics. While clearly contributing to the field, he did not accept the more philosophical consequences and interpretations of quantum mechanics, such as the lack of deterministic causality and the assertion that a single subatomic particle can occupy numerous areas of space at one time. He also was the first to notice some of the apparently exotic consequences of entanglement and used them to formulate the Einstein-Podolsky-Rosen paradox, in the hope of showing that quantum mechanics had unacceptable implications. This was in 1935. In 1964 John Bell showed (see Bell inequality) that Einstein's position could be maintained only if quantum mechanics were completed by hidden variables, and that it thus rested on wrong philosophical assumptions. According to Bell's paper and the Copenhagen interpretation (the common interpretation of quantum mechanics by physicists since 1927), and contrary to Einstein's ideas, quantum mechanics was

- neither a "realistic" theory (since quantum measurements do not state pre-existing properties, but rather they prepare properties)

- nor a "local" theory (since the state vector simultaneously determines the probability amplitudes at all locations)

The Einstein-Podolsky-Rosen paradox shows in any case that there exist experiments by which one can measure the state of one particle and instantaneously change the state of its entangled partner, although the two particles can be an arbitrary distance apart; however, this effect does not violate causality, since no transfer of information happens. These experiments are the basis of one of the most topical applications of the theory, quantum cryptography, which has been on the market since 2004 and works well, although only over limited distances, typically up to 1000 km.

Gravity is negligible in many areas of particle physics, so that unification between general relativity and quantum mechanics is not an urgent issue in those applications. However, the lack of a correct theory of quantum gravity is an important issue in cosmology and in physicists' search for an elegant "theory of everything". Thus, resolving the inconsistencies between the two theories has been a major goal of twentieth- and twenty-first-century physics. Many prominent physicists, including Stephen Hawking, have labored in the attempt to discover a theory underlying everything, not only combining different models of subatomic physics but also deriving the universe's four forces (the strong force, electromagnetism, the weak force, and gravity) from a single force or phenomenon. One of the leaders in this field is Edward Witten, a theoretical physicist who formulated the groundbreaking M-theory, which is an attempt at describing the supersymmetry-based string theory. |

Applications  |

Quantum mechanics has had enormous success in explaining many of the features of our world. The individual behaviour of the subatomic particles that make up all forms of matter—electrons, protons, neutrons, photons and others—can often only be satisfactorily described using quantum mechanics. Quantum mechanics has strongly influenced string theory, a candidate for a theory of everything (see reductionism) and the multiverse hypothesis. It is also related to statistical mechanics.

Quantum mechanics is important for understanding how individual atoms combine covalently to form chemicals or molecules. The application of quantum mechanics to chemistry is known as quantum chemistry. (Relativistic) quantum mechanics can in principle mathematically describe most of chemistry. Quantum mechanics can provide quantitative insight into ionic and covalent bonding processes by explicitly showing which molecules are energetically favorable to which others, and by approximately how much. Most of the calculations performed in computational chemistry rely on quantum mechanics.

Much of modern technology operates at a scale where quantum effects are significant. Examples include the laser, the transistor (and thus the microchip), the electron microscope, and magnetic resonance imaging. The study of semiconductors led to the invention of the diode and the transistor, which are indispensable for modern electronics.

Researchers are currently seeking robust methods of directly manipulating quantum states. Efforts are being made to develop quantum cryptography, which will allow guaranteed secure transmission of information. A more distant goal is the development of quantum computers, which are expected to perform certain computational tasks exponentially faster than classical computers. Another active research topic is quantum teleportation, which deals with techniques to transmit quantum states over arbitrary distances.

Quantum tunneling is vital in many devices, even in the simple light switch, as otherwise the electrons in the electric current could not penetrate the potential barrier made up of a layer of oxide. Flash memory chips found in USB drives use quantum tunneling to erase their memory cells.
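To give a feel for how strongly tunneling depends on barrier thickness, the sketch below uses the common wide-barrier approximation T ≈ exp(-2κd), with κ = sqrt(2m(V - E)) / ħ. The barrier height of 1 eV and the nanometre-scale widths are assumed values chosen for illustration; they are not measurements of any real oxide layer or memory cell.

```python
import math

HBAR = 1.054571817e-34            # reduced Planck constant, J*s
ELECTRON_MASS = 9.1093837015e-31  # kg
JOULES_PER_EV = 1.602176634e-19

def tunneling_probability(barrier_ev, width_m, mass=ELECTRON_MASS):
    """Approximate transmission exp(-2*kappa*d); barrier_ev is (V - E) in eV."""
    kappa = math.sqrt(2.0 * mass * barrier_ev * JOULES_PER_EV) / HBAR
    return math.exp(-2.0 * kappa * width_m)

for width_nm in (0.5, 1.0, 2.0):
    t = tunneling_probability(1.0, width_nm * 1e-9)
    print(f"{width_nm} nm barrier: T ~ {t:.2e}")
```

Because the width sits in the exponent, doubling the barrier thickness squares the (already small) transmission probability in this approximation.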

QM primarily applies to the atomic regimes of matter and energy, but some systems exhibit quantum mechanical effects on a large scale; superfluidity (the frictionless flow of a liquid at temperatures near absolute zero) is one well-known example. Quantum theory also provides accurate descriptions for many previously unexplained phenomena such as black body radiation and the stability of electron orbitals. It has also given insight into the workings of many different biological systems, including smell receptors and protein structures.[40] Even so, classical physics often can be a good approximation to results otherwise obtained by quantum physics, typically in circumstances with large numbers of particles or large quantum numbers. (However, some open questions remain in the field of quantum chaos.) |

Philosophical Consequences  |

The Copenhagen interpretation, due largely to the Danish theoretical physicist Niels Bohr, is the interpretation of quantum mechanics most widely accepted amongst physicists. According to it, the probabilistic nature of quantum mechanics predictions cannot be explained in terms of some other deterministic theory, and does not simply reflect our limited knowledge. Quantum mechanics provides probabilistic results because the physical universe is itself probabilistic rather than deterministic.

Albert Einstein, himself one of the founders of quantum theory, disliked this loss of determinism in measurement (this dislike is the source of his famous quote, "God does not play dice with the universe."). Einstein held that there should be a local hidden variable theory underlying quantum mechanics and that, consequently, the present theory was incomplete. He produced a series of objections to the theory, the most famous of which has become known as the Einstein-Podolsky-Rosen paradox. John Bell showed that the EPR paradox led to experimentally testable differences between quantum mechanics and local realistic theories. Experiments have been performed confirming the accuracy of quantum mechanics, thus demonstrating that the physical world cannot be described by local realistic theories.[41] The Bohr-Einstein debates provide a vibrant critique of the Copenhagen Interpretation from an epistemological point of view.
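The testable difference Bell identified is often expressed through the CHSH form of his inequality. The sketch below assumes the standard quantum prediction E(a, b) = -cos(a - b) for spin measurements on an entangled singlet pair and compares it with the largest value any local deterministic assignment of ±1 outcomes can reach; the angle choices and function names are illustrative.

```python
import math
from itertools import product

def singlet_correlation(a, b):
    """Quantum prediction for analyzer angles a and b (radians) on a singlet pair."""
    return -math.cos(a - b)

def chsh(a, a2, b, b2):
    """CHSH combination |E(a,b) - E(a,b') + E(a',b) + E(a',b')|."""
    return abs(singlet_correlation(a, b) - singlet_correlation(a, b2)
               + singlet_correlation(a2, b) + singlet_correlation(a2, b2))

# Quantum value at a commonly used set of analyzer angles.
s_quantum = chsh(0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)

# Local deterministic models: every outcome is fixed in advance as +1 or -1.
s_local = max(abs(A * B - A * B2 + A2 * B + A2 * B2)
              for A, A2, B, B2 in product((-1, 1), repeat=4))

print(s_quantum, s_local)
```

The quantum value of 2√2 exceeds the local bound of 2, which is the kind of gap the experiments mentioned above are designed to detect.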

The Everett many-worlds interpretation, formulated in 1956, holds that all the possibilities described by quantum theory simultaneously occur in a multiverse composed of mostly independent parallel universes.[42] This is not accomplished by introducing some new axiom to quantum mechanics, but on the contrary by removing the axiom of the collapse of the wave packet: All the possible consistent states of the measured system and the measuring apparatus (including the observer) are present in a real physical (not just formally mathematical, as in other interpretations) quantum superposition. Such a superposition of consistent state combinations of different systems is called an entangled state.

While the multiverse is deterministic, we perceive non-deterministic behavior governed by probabilities, because we can observe only the universe we inhabit, i.e. the consistent state contribution to the superposition mentioned above. Everett's interpretation is perfectly consistent with experiments testing John Bell's inequality and makes them intuitively understandable. However, according to the theory of quantum decoherence, the parallel universes will never be accessible to us. This inaccessibility can be understood as follows: Once a measurement is done, the measured system becomes entangled with both the physicist who measured it and a huge number of other particles, some of which are photons flying away towards the other end of the universe; in order to prove that the wave function did not collapse one would have to bring all these particles back and measure them again, together with the system that was measured originally. This is completely impractical, but even if one could theoretically do this, it would destroy any evidence that the original measurement took place (including the physicist's memory).

|

|

Double slit experiment

Hexagon with hexagram inside

Tetrahedron with the spheres

OM

Octave

Sextagon with six pointed light

Torus in the sphere

Full pet

Flower of life in the sphere

Edge star

3D star tetrahedron

512

Filled sphere

Hollow sphere

Nosphere

Low pressure system over Iceland

Swastika |

|

2 Art

Circles, Triangles and squares